To add to everything else that’s been said on TLF about Net neutrality, here is an article I wrote discussing the problems in Chairman Genachowski’s speech of last week. Many NN activists bizarrely think that history proves their argument right, but that is false. The reality is that history shows that when government attempts to regulate in an effort to “create competition,” the opposite often results.

Given this, it was sad to see former Chairman Martin recently endorsing Net neutrality regs (except for wireless). He should know better.

Last Wednesday, Holman Jenkins penned a column in The Wall Street Journal about net neutrality (Adam discussed it here). In response, I have a letter to the editor in today’s The Wall Street Journal:

To the Editor:

Mr. Jenkins suggests that Google would likely “shriek” if a startup were to mount its servers inside the network of a telecom provider. Google already does just that. It is called “edge caching,” and it is employed by many content companies to keep costs down.

It is puzzling, then, why Google continues to support net neutrality. As long as Google produces content that consumers value, consumers will demand an unfettered Internet pipe. Political battles aside, content and infrastructure companies have an inherently symbiotic relationship.

Fears that Internet providers will, absent new rules, stifle user access to content are overblown. If a provider were to, say, block or degrade YouTube videos, its customers would likely revolt and go elsewhere. Or they would adopt encrypted network tunnels, which route around Internet roadblocks.

Not every market dispute warrants a government response. Battling giants like Google and AT&T can resolve network tensions by themselves.

Ryan Radia

Competitive Enterprise Institute

Washington

To be sure, the market for residential Internet service is not all that competitive in some parts of the country (Rochester, New York, for instance), so a provider might in some cases be able to get away with unsavory practices for a sustained period without suffering the consequences. Yet ISP competition is on the rise, and a growing number of Americans have access to three or more providers. This is especially true in big cities like Chicago, Baltimore, and Washington, D.C.

Instead of trying to put a band-aid on problems that stem from insufficient ISP competition, the FCC should focus on reforming obsolete government rules that prevent ISP competition from emerging. Massive swaths of valuable spectrum remain unavailable to would-be ISP entrants, and municipal franchising rules make it incredibly difficult to lay new wire in public rights-of-way for the purpose of delivering bundled data and video services.

Stacey Higginbotham at GigaOm conducted a great interview with Verizon CTO Dick Lynch, in which he endorsed broadband metering:

We believe that you have to be allowed to have a level of service that is not on a public Internet. What you’re suggesting is different kind of IP service that’s not delivered over the public Internet and that needs to be part of the option set in the argument.

http://blip.tv/play/AYGjswkC

Such metering, if allowed by Washington, might lessen the need for some of the network management practices that so incense net neutrality fanatics. So I’d really like to see Verizon and other ISPs explore using a “Ramsey two-part tariff,” as Adam has suggested again and again:

A two-part tariff (or price) would involve a flat fee for service up to a certain level and then a per-unit / metered fee over a certain level.

I don’t know where the demarcation should be in terms of where the flat rate ends and the metering begins; that’s for market experimentation to sort out. But the clear advantage of this solution is that it preserves flat-rate, all-you-can-eat pricing for casual to moderate bandwidth users and only resorts to less popular metering pricing strategies when the usage is “excessive,” however that is defined.

ISPs would have an incentive to set the demarcation to a point where, roughly, the vast majority of users would never have to worry about their usage, but the small percentage of bandwidth hogs would have a real incentive to cut back on bandwidth use—thus avoiding the “Tragedy of the Commons,” which is really the “Tragedy of the Unmetered Commons,” as I noted a year ago.
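To make the two-part tariff concrete, here is a minimal Python sketch of how a monthly bill might be computed under such a plan. The function name `two_part_tariff_bill` and the plan parameters (a $40 flat fee, a 250 GB allowance, and $1 per GB beyond it) are hypothetical, chosen only for illustration; they do not reflect any actual ISP’s pricing, and where the flat rate should end is, as noted above, a question for market experimentation.

```python
def two_part_tariff_bill(usage_gb: float, flat_fee: float,
                         allowance_gb: float, per_gb_rate: float) -> float:
    """Return the monthly bill under a simple two-part tariff.

    The flat fee covers all usage up to allowance_gb; every gigabyte
    beyond the allowance is billed at per_gb_rate.
    """
    if usage_gb < 0:
        raise ValueError("usage cannot be negative")
    # Only usage above the allowance is metered.
    overage_gb = max(0.0, usage_gb - allowance_gb)
    return flat_fee + overage_gb * per_gb_rate


# Hypothetical plan parameters, for illustration only.
FLAT_FEE = 40.0       # dollars per month
ALLOWANCE_GB = 250.0  # usage covered by the flat fee
PER_GB_RATE = 1.0     # dollars per GB above the allowance

if __name__ == "__main__":
    for usage in (20.0, 250.0, 400.0):
        bill = two_part_tariff_bill(usage, FLAT_FEE, ALLOWANCE_GB, PER_GB_RATE)
        print(f"{usage:>6.0f} GB -> ${bill:.2f}")
```

Under these assumed numbers, a casual user at 20 GB and a user right at the 250 GB line both pay the flat $40, while a 400 GB user pays $40 plus 150 GB × $1, or $190. Only the heaviest users ever see a metered charge.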

In a week in which neutrality regulation is making a lot of news, I hope that Robert Hahn and Hal Singer’s terrific new study, “Why the iPhone Won’t Last Forever and What the Government Should Do to Promote its Successor,” gets some attention. It provides a wonderful overview of how dynamically competitive the mobile marketplace has been over the past two decades and why critics are wrong to get worked up about the short-term “dominance” of Apple’s iPhone. Here’s the abstract of their paper:

Because of the overwhelming, positive response to the iPhone as compared to other smart phones, exclusive agreements between handset makers and wireless carriers have come under increasing scrutiny by regulators and lawmakers. In this paper, we document the myriad revolutions that have occurred in the mobile handset market over the past twenty years. Although casual observers have often claimed that a particular innovation was here to stay, they commonly are proven wrong by unforeseen developments in this fast-changing marketplace. We argue that exclusive agreements can play an important role in helping to ensure that another must-have device will soon come along that will supplant the iPhone, and generate large benefits for consumers. These agreements, which encourage risk taking, increase choice, and frequently lower prices, should be applauded by the government. In contrast, government regulation that would require forced sharing of a successful break-through technology is likely to stifle innovation and hurt consumer welfare.

“New technologies often seemingly emerge from nowhere, but also frequently lose their luster quickly,” Hahn and Singer go on to argue. As evidence they cite the recent examples of Second Life and MySpace, which just a few years ago were hyped as potentially becoming dominant providers in their respective areas but are now subject to intense competition. “[T]he mobile handset market is subject to these same disruptive forces,” they argue.

Adam Thierer and I have warned that neutrality regulation, once imposed on broadband providers, will extend to other Internet services wherever “gatekeepers” are alleged to control access to a platform used by others. In short, the slippery slope of creeping common carriage is real and we’re already heading down it, with cyber-collectivist “luminaries” like Jonathan Zittrain and Frank Pasquale demanding neutrality regulation for devices, application platforms like iTunes and Facebook, and search!

TLF Reader Jim Reardon made a particularly astute observation on my post asking whether Americans really want net neutrality regulation:

Regulation of any service, product or industry is preceded by definition. Once defined, it is subject to taxation.

[Net Neutrality regulation] is a prelude to taxation of Internet products and services. It will likely start with telephony services and proceed accordingly to financial services, and continue from there.

As such, the activity is essentially neutral insofar as technology innovation is concerned — so long as applicable taxes are paid the government will ensure that the service is not disfavored by the network operators.

Absolutely right! One of the greatest barriers to government regulation and taxation of the Internet today is the lack of clear definitions: The FCC rules will tell you precisely what “cable television” or “commercial radio” mean, but the concepts of “social networking,” “Internet video,” “blogging,” and even “search” are indeterminate and constantly evolving.

Ronald Reagan once quipped:

Government’s view of the economy could be summed up in a few short phrases: If it moves, tax it. If it keeps moving, regulate it. And if it stops moving, subsidize it.

Fortunately, government’s ability to implement this view depends—to paraphrase President Clinton—“on what the meaning of the word ‘is’ ‘it’ is”: Allowing “it” to remain beautifully amorphous may be the best way to keep government at bay.

Whatever you think about this messy dispute between AT&T and Google about how to classify web-based telephony apps for regulatory purposes — in this case, Google Voice — the key issue not to lose sight of here is that we are inching ever closer to FCC regulation of web-based apps! Again, this is the point we have stressed here again and again and again and again when opposing Net neutrality mandates: If you open the door to regulation of one layer of the Net, you open up the door to the eventual regulation of all layers of the Net.

You might not buy that story initially, but if you doubt it, then I invite you to read just about any history of American broadcast media regulation over the course of the past seven decades. (You might want to start with Krattenmaker & Powe’s Regulating Broadcast Programming or Jonathan Emord’s Freedom, Technology, and the First Amendment.) In such histories you will find a common theme: Once regulation of media and communications platforms gets underway, the natural progression of things is uni-directional — Up! That is, when new questions arise about how to “deal with” a new service, network, platform, or technology, the general tendency is to “regulate up” instead of “deregulating down.” When regulators are given a greater say about the contours of markets as technologies evolve and/or converge, we shouldn’t be surprised that their first instinct is to “bring them into the fold.”

And, sadly, that is exactly what is likely to occur eventually with Google Voice. The only really interesting question is what else regulators start mucking with in the search and applications layer once they get their hands on it. And if you still insist that I am being overly paranoid about “regulatory creep” and the prospect of the FCC gradually transforming into the Federal Information Commission, then consider what the agency had to say about cloud computing in paragraph 60 (pg. 21) of the FCC’s recent Wireless Innovation and Investment Notice of Inquiry, which was launched on August 27th.

FOXNews.com has just published an editorial that I penned about Monday’s net neutrality announcement from the FCC.

by Ryan Radia

The federal government may gain broad new powers to regulate Internet providers next month if Federal Communications Commission Chairman Julius Genachowski gets his way. In a milestone speech on Monday, Genachowski proposed sweeping new regulations that would give the FCC the formal authority to dictate application and network management practices to companies that offer Internet access, including wireless carriers like AT&T and Verizon Wireless.

Genachowski’s proposed rules would make good on a pledge that President Obama made in his campaign to enshrine net neutrality as law. The announcement was met with cheers by a small but vocal crowd of activists and academics who have been pushing hard for net neutrality for years. But if bureaucrats and politicians truly care about neutrality, they would be wise to resist calls to expand the government’s power over private networks. Instead, policymakers should recognize that it is far more important for government to remain neutral to competing business models — open, closed, or any combination thereof.

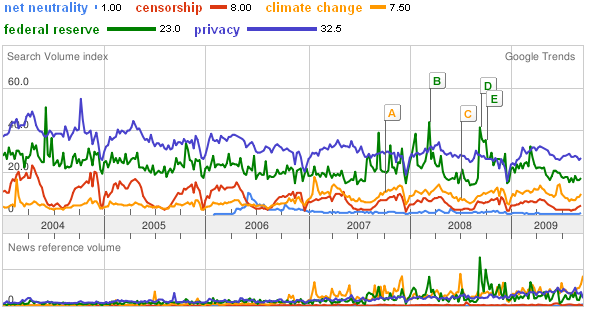

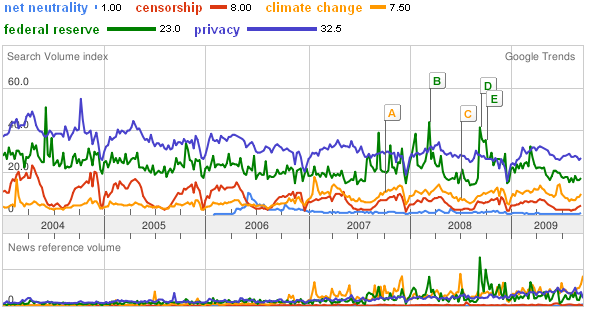

Those who advocate regulating Internet service providers as common carriers subject to “open access” mandates (a/k/a “Net Neutrality”) want us to believe that their cause is the “Civil Rights” issue of the digital age, with huge popular support and opposed only by self-interested cable companies and their henchmen. In fact, such regulations would actually harm consumers, increase broadband prices, retard the heretofore-explosive growth of bandwidth, and dramatically increase government control over the Internet. Of course, the degree of public interest in a cause doesn’t actually tell us anything about its justice and, fortunately, we live in a democratic oligarchic republic, not a pure democracy. But it’s worth asking whether Americans are really up in arms about the need for “Net Neutrality” regulations. Google Trends suggests not:

This kind of comparison should dispel once and for all the myth of a popular groundswell for net neutrality regulation—especially since online search volumes heavily over-represent the interests of the digerati, thus over-stating general interest in web-related topics.

In fact, “Net Neutrality” regulation is a niche cause trumpeted incessantly by the blogosphere with about the same level of broad popular interest online as “housing rights”—a topic about which most of us probably don’t often fall into conversation (unless we happen to live in Bakuninist Berkeley or the Bolivarian Caliphate of Cambridge, MA, ground-zero of American Chavismo).

Holman Jenkins has a stinging editorial in today’s Wall Street Journal entitled, “Neutering the ‘Net,” which borrows a term that my friend Randy May coined long ago to describe what net neutrality regulation will ultimately accomplish. What I like best about the Jenkins essay was the way he exposed Free Press for their hypocrisy over metering as a possible alternative approach to network management, something I documented in this piece and this piece about their new-found love of Internet price controls. Here’s how Jenkins puts it in his essay today:

The mask really slipped earlier this year when Time Warner Cable began experimenting with usage-based pricing to protect the average broadband customers from the 20% of users who create 80% of the traffic. A lobby called Free Press, the most extreme of the pro-net neutrality interests, went ballistic, calling metered pricing a “price-gouging scheme” and backing a bill in Congress to ban it.

Never mind that Free Press had previously argued just the opposite, saying usage-based pricing was a fairer way to deal with congestion than, say, by selectively slowing down file-sharing sites that gobble up disproportionate broadband capacity. Never mind, too, the irony that the net-neut campaign against the selective slowing of non-urgent traffic has left only differential pricing as a way to bring a modicum of efficiency to network usage.

Indeed. Of course, we should expect nothing less from the neo-Marxist media reformistas at the UnFree Press.

Leviathan Spam

Send the bits with lasers and chips

See the bytes with LED lights

Wireless, optical, bandwidth boom

A flood of info, a global zoom

Now comes Lessig

Now comes Wu

To tell us what we cannot do

The Net, they say,

Is under attack

Stop!

Before we can’t turn back

They know best

These coder kings

So they prohibit a billion things

What is on their list of don’ts?

Most everything we need the most

To make the Web work

We parse and label

We tag the bits to keep the Net stable

The cloud is not magic

It’s routers and switches

It takes a machine to move exadigits

Now Lessig tells us to route is illegal

To manage Net traffic, Wu’s ultimate evil

The Technology Liberation Front is the tech policy blog dedicated to keeping politicians' hands off the 'net and everything else related to technology.
