Articles by Adam Thierer

Senior Fellow in Technology & Innovation at the R Street Institute in Washington, DC. Formerly a senior research fellow at the Mercatus Center at George Mason University, President of the Progress & Freedom Foundation, Director of Telecommunications Studies at the Cato Institute, and a Fellow in Economic Policy at the Heritage Foundation.

It is my great pleasure to welcome Steve Titch as a contributor to the Technology Liberation Front. Like me, Steve has some journalism blood in his background but came to find that think tank hours were much better (even if the pay isn’t)! He has been a telecom and IT policy analyst for the Reason Foundation since 2005 and you can find a collection of his past work with Reason here.

Previously he was a senior fellow at the Heartland Institute and managing editor of Heartland IT and Telecom News. He has published research reports and editorials on a wide array of issues that are of interest to TLF readers, including: municipal broadband, network neutrality, universal service and telecom taxes. We very much look forward to his contributions here.

Welcome to the TLF, Steve!

by Adam Thierer & Berin Szoka

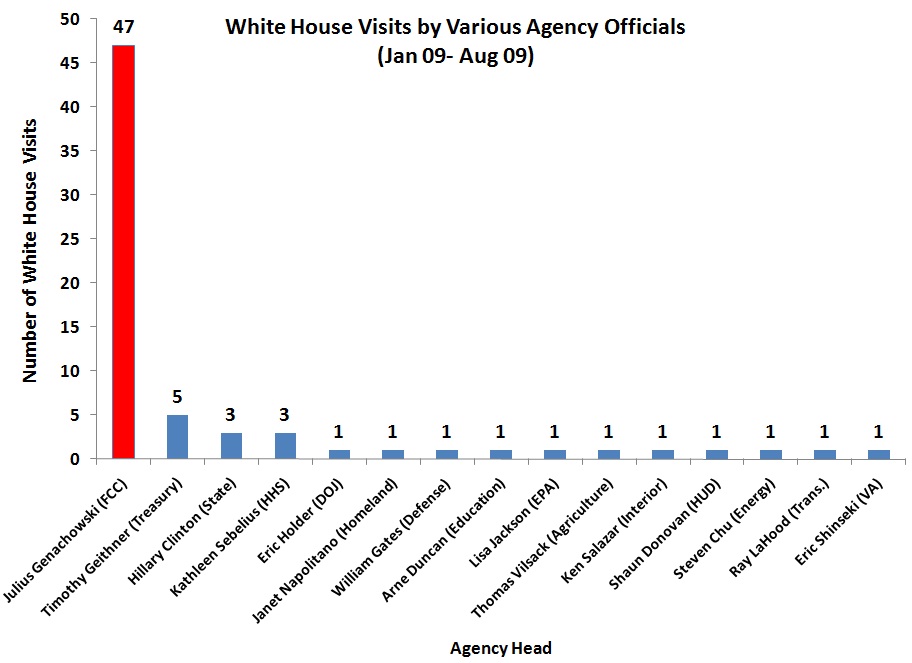

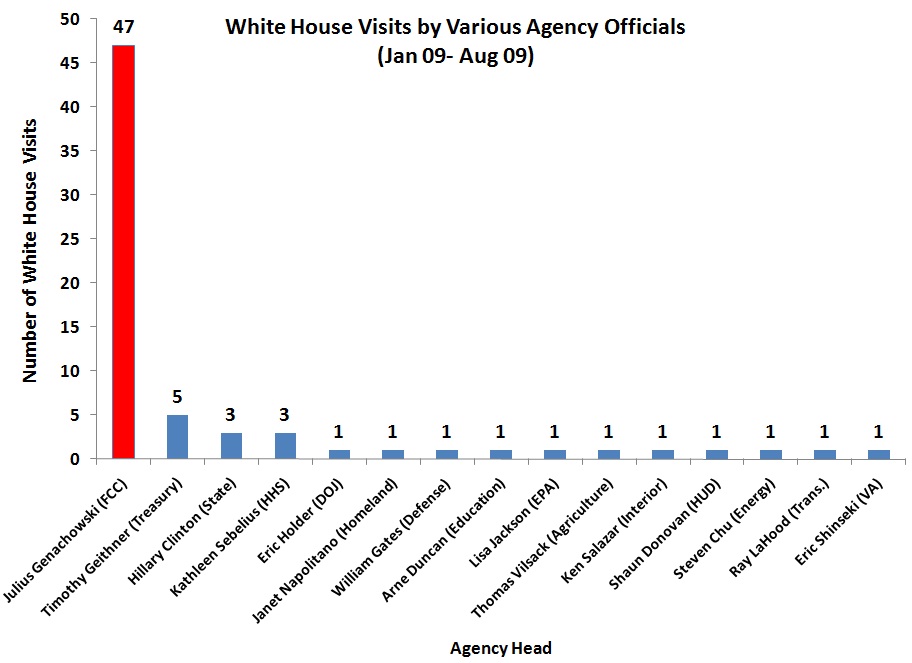

Move over, health care reform, climate change, and the economy. Judging by White House visits by various government agency heads, the Obama administration instead appears preoccupied with the re-regulation of communications, media, and the Internet. The Administration has just released logs of all visitors to the White House and Executive Office Buildings from Obama’s inauguration through August—including a staggering 47 visits by Federal Communications Commission (FCC) Chairman Julius Genachowski. By contrast, no other major agency head logged more than five visits. Chairman Genachowski obviously has an audience with those at the highest levels of power, including the President himself, but this raises questions about just how “independent” this particular regulator and his agency really are.

Unprecedented Transparency by White House

The Administration deserves credit for releasing these visitor logs, which offer unprecedented transparency into the White House’s workings. Unfortunately, the logs lack visitors’ affiliation and title, making it difficult to discern subtle patterns. Furthermore, each entry indicates only one “visitee” and the total number of people involved. Full disclosure requires identifying all meeting participants. Nonetheless, President Obama’s gesture is a great first step toward improved government accountability.

This openness allows us to ask questions we couldn’t pose for previous administrations—such as why the FCC head seems to have unparalleled access to the White House. Lacking data from previous administrations, it’s difficult to make direct comparisons with previous FCC Chairmen, but the sheer number of visits by Chairman Genachowski leaves no doubt about his uniquely close involvement with the White House. Continue reading →

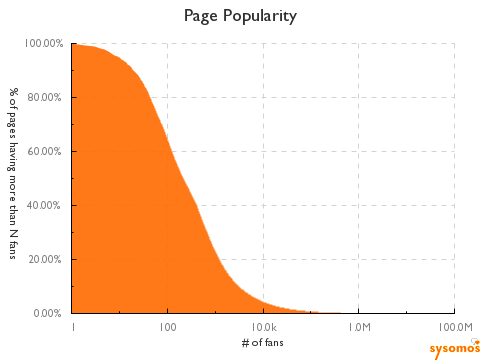

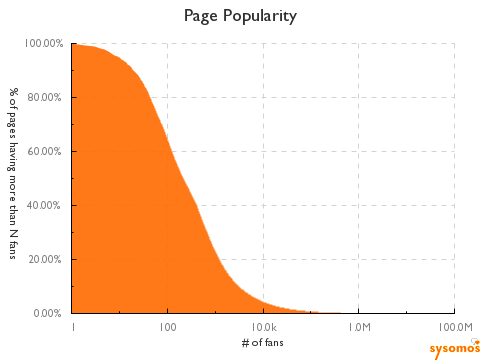

Perfect media equality is impossible. There has never been anything close to “equal outcomes” when it comes to the distribution or relative success of old media: books, magazines, music, movies, theater tickets, etc. A small handful of titles have always dominated, usually according to a classic “power law” or “80-20” distribution, with roughly 20% of the titles getting 80% of the traffic / revenue.

But here’s the really interesting thing:

This trend is increasing, not decreasing, for newer and more “democratic” online media. As I pointed out in two previous essays [“YouTube, Power Laws & the Persistence of Media Inequality” & “Cuban on Fragmentation & Attention in the Blogosphere (or Why Power Laws Really Do Govern All Media)”], there is solid evidence that blogs, YouTube, Twitter, and other digital media outlets and platforms not only follow a classic power law distribution but that the distribution is even more heavily skewed toward the “fat head” of the distribution curve, not “the long tail” of it.

The latest evidence of the persistence of power laws across media comes from Facebook. Erick Schonfeld has a new essay up at TechCrunch (“It’s Not Easy Being Popular. 77 Percent Of Facebook Fan Pages Have Under 1,000 Fans”) highlighting some new findings from an upcoming report by Sysomos, a social media monitoring and analytics firm. Here’s the summary from Schonfeld: Continue reading →

The Internet is massive. That’s the ‘no-duh’ statement of the year, right? But seriously, the sheer volume of transactions (both economic and non-economic) is simply staggering. Consider a few factoids to give you a flavor of just how much is going on out there:

- In 2006, Internet users in the United States viewed an average of 120.5 Web pages each day.

- There are over 1.4 million new blog posts every day.

- Social networking giant Facebook reports that each month, its over 300 million users upload more than 2 billion photos, 14 million videos, and create over 3 million events. More than 2 billion pieces of content (web links, news stories, blog posts, notes, photos, etc.) are shared each week. There are also roughly 45 million active user groups on the site.

- YouTube reports that 20 hours of video are uploaded to the site every minute.

- Amazon reported that on December 15, 2008, 6.3 million items were ordered worldwide, a rate of 72.9 items per second.

- Every six weeks, there are 10 million edits made to Wikipedia.
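To get a feel for the scale of the figures above, here is a rough back-of-the-envelope sketch that converts a few of them into per-second or per-day rates. The variable names are my own, and the inputs are simply the numbers quoted in the list, so treat the results as ballpark illustrations rather than precise measurements.

```python
# Rough rate conversions for the figures quoted in the list above.
# All inputs come straight from the list; the names are illustrative only.
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds

# Amazon: 6.3 million items ordered on December 15, 2008
amazon_items_per_second = 6_300_000 / SECONDS_PER_DAY

# Blogs: "over 1.4 million" new posts per day, so this is a lower bound
blog_posts_per_second = 1_400_000 / SECONDS_PER_DAY

# YouTube: 20 hours of video uploaded every minute
youtube_hours_per_day = 20 * 60 * 24

print(f"Amazon items per second: {amazon_items_per_second:.1f}")    # 72.9
print(f"Blog posts per second (at least): {blog_posts_per_second:.1f}")
print(f"YouTube hours uploaded per day: {youtube_hours_per_day}")   # 28800
```

The Amazon result matches the 72.9 items per second cited above, and the YouTube figure works out to 28,800 hours of new video every day.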

Now, let’s think about how some of our lawmakers and media personalities talk about the Internet. If we were to judge the Internet based upon the daily headlines in various media outlets or from the titles of various Congressional or regulatory agency hearings, then we’d be led to believe that the Internet is a scary, dangerous place. That’s especially the case when it comes to concerns about online privacy and child safety. Everywhere you turn there’s a bogeyman story about the supposed dangers of cyberspace.

But let’s go back to the numbers. While I certainly understand the concerns many folks have about their personal privacy or their child’s safety online, the fact is the vast majority of transactions that take place online each and every second of the day are of an entirely harmless, even socially beneficial nature. I refer to this disconnect as the “problem of proportionality” in debates about online safety and privacy. People are not just making mountains out of molehills; in many cases they are just making the molehills up or blowing them massively out of proportion. Continue reading →

In a recent PFF paper I argued that “We Are Living in the Golden Age of Children’s Programming,” and showed how, despite incessant complaints by many policymakers:

the overall market for family and children’s programming options continues to expand quite rapidly. Thirty years ago, families had a limited number of children’s television programming options at their disposal on broadcast TV. Today, by contrast, there exists a broad and growing diversity of children’s television options from which families can choose.

I then documented there and in my book, Parental Controls & Online Child Protection:

- the many excellent family- or child-oriented networks available on cable, telco, and satellite television today;

- the growing universe of religious / spiritual television networks;

- the many family or educational programs that traditional TV broadcasters offer; or

- the massive market for interactive computer software or Internet websites for children.

And every time I turn around I find another great show, service, or site for families to choose from. Continue reading →

Around this time last year, a relative 20 years my senior was asking me what I was writing about and I mentioned how I’d been collecting anecdotes and stats for what was becoming our “Cutting the Video Cord” series here. That series has documented how the Internet and new digital media options are displacing traditional video distribution channels. We’ve been exploring what that means for consumers, regulators and the media itself.

I asked this relative of mine if he spent any time watching his favorite shows, or even movies, online or through means other than his cable or satellite subscription. He said he didn’t because of the lack of an easy way to find all his favorite shows quickly. Specifically, he lamented the lack of a good “TV Guide” for online video. I explained to him that, for most of us 40 and under, our “TV Guide” was called “a search engine”! It’s pretty easy to just pop any show name or topic into your preferred search engine and then click on “Video” to see what you get back. Nonetheless, I had to concede that random searching for video wouldn’t necessarily be the way everyone would want to go about it. And it wouldn’t necessarily organize the results in a way viewers would find useful–grouping things thematically by genre or offering the sort of related programming you might be interested in seeing.

Well, good news, such a service now exists. Katherine Boehret of the Wall Street Journal brought “Clicker.com,” a terrific new (and free) video search service, to my attention in her column last night: Continue reading →

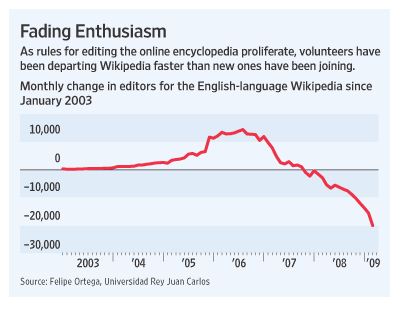

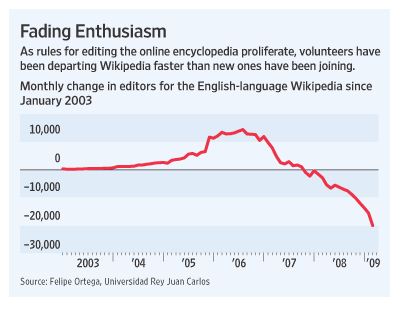

There was a very interesting front-page article in the Wall Street Journal yesterday by Julia Angwin and Geoffrey Fowler wondering whether Wikipedia, the wildly popular online encyclopedia, was dying because of new posting guidelines which have apparently led to a drop-off in the number of volunteers contributing to the site. In their article (“Volunteers Log Off as Wikipedia Ages”), Angwin and Fowler note that:

In the first three months of 2009, the English-language Wikipedia suffered a net loss of more than 49,000 editors, compared to a net loss of 4,900 during the same period a year earlier, according to Spanish researcher Felipe Ortega, who analyzed Wikipedia’s data on the editing histories of its more than three million active contributors in 10 languages. Eight years after Wikipedia began with a goal to provide everyone in the world free access to “the sum of all human knowledge,” the declines in participation have raised questions about the encyclopedia’s ability to continue expanding its breadth and improving its accuracy. Errors and deliberate insertions of false information by vandals have undermined its reliability.

The article suggests that new posting and editing guidelines may have something to do with the drop:

But as it matures, Wikipedia, one of the world’s largest crowdsourcing initiatives, is becoming less freewheeling and more like the organizations it set out to replace. Today, its rules are spelled out across hundreds of Web pages. Increasingly, newcomers who try to edit are informed that they have unwittingly broken a rule — and find their edits deleted, according to a study by researchers at Xerox Corp. “People generally have this idea that the wisdom of crowds is a pixie dust that you sprinkle on a system and magical things happen,” says Aniket Kittur, an assistant professor of human-computer interaction at Carnegie Mellon University who has studied Wikipedia and other large online community projects. “Yet the more people you throw at a problem, the more difficulty you are going to have with coordinating those people. It’s too many cooks in the kitchen.”

Let’s say it’s true that the new guidelines have resulted in fewer people contributing. Is that automatically a bad thing? I suppose it depends on other variables that are harder to measure. Namely, quality metrics. This is where every discussion about Wikipedia gets sticky. Continue reading →

Free Press, the radical pro-regulatory media activist group, recently filed comments with the Federal Trade Commission (FTC) for the agency’s upcoming workshop on “How Will Journalism Survive the Internet Age?” The Free Press comments provide an enlightening glimpse into how many on the Left now think about media policy in America. Their approach can be summarized as follows:

- Nothing the private sector can do will save journalism (unless it is entirely non-profit / non-commercial in nature);

- Even if there were something that private players could do to save journalism, Free Press would likely have federal authorities forbid it anyway (especially if it involved new business ownership patterns or combinations); and,

- The only thing that can really save journalism is a “public option” for the press in the form of massive state subsidization of media in this country.

To elaborate on the last point, here’s how Free Press summarizes what they are looking for:

For U.S. public media to become a truly world-class system will require a substantial increase in funding. This could be accomplished by an increase in direct congressional appropriations to the Corporation for Public Broadcasting. With increased funding — to as little as $5 per person, increasing annual appropriations to some $1.5 billion — the American public media system could dramatically increase its capacity, reach, diversity and relevance.

But they stress that a simple expansion of the PBS/NPR/CPB non-commercial model will not be enough since that system is “vulnerable to repeated threats of funding cuts” and too “reliant on corporate backing, via the underwriting process.” They want to go well beyond non-commercial media, therefore, and have the state start building a massive public media infrastructure. Here’s where their pitch for a public option for the press comes in: Continue reading →

Arik Hesseldahl has an interesting piece in Business Week about Apple’s control of the iPhone App approval process in which he asks: “Is a smartphone gatekeeper needed?” Plenty of people don’t think so and have raised a stink about Apple trying to play that role for the iPhone. It certainly could be true, as some critics suggest, that Apple is being too heavy-handed on occasion when rejecting apps, but it’s always easy for those of us on the outside of the process to think that. Hesseldahl notes that:

it’s tempting to consider the implications of a less hands-on approach, as is the case with Macs, Microsoft (MSFT) Windows PCs, or other smartphones, including those running the Google (GOOG)-backed Android operating system. The software market for personal computing has existed in this way for nearly three decades, and while there have certainly been some problems along the way, I’d argue that overall we’re better off without Microsoft or Apple or some other organization approving software applications before they’re released to the market. PC users have learned to be careful about what they put on their computers through unhappy trial and error.

But he also notes that there is another side to the story: Continue reading →

It’s truly amazing how fast mobile broadband demand is expanding. A couple of things caught my eye yesterday that really drove that home. First, I was reading Bernstein Research’s weekly (subscription-only) newsletter and Craig Moffett, one of America’s top media and communications analysts, summarized the growing mobile bandwidth crunch as follows:

To fully grasp the challenge facing wireless providers as we make the transition from wireless voice to wireless data, it is helpful to put some ballpark numbers around current usage levels. Today, the average voice-only customer consumes something like 50 megabytes of data every month. For that, they pay about $40, or about $0.80 per megabyte. That’s 70% of wireless industry revenues. Text messaging generates another $10 per month for a minuscule amount of data (in fact, arguably no throughput at all, since text messaging travels in a signaling band rather than in the carrier band itself). Let’s call it $1,000 per megabyte. That’s another 15% of industry revenues. On a blended basis, then, that’s $1.00 per megabyte for 85% of industry revenues.

And then there’s the iPhone. By some estimates, the average iPhone user consumes as much as 800 megabytes per month. Take out their 50 Mb for voice and you’re looking at 750 Mb of data… for an additional $30. For the mathematically challenged, that’s a princely sum of… wait for it… four cents per megabyte. Worse, we noted that the FCC’s wireless net neutrality policies posed the risk of “bandwidth arbitrage,” where low bandwidth services (at $1.00 per megabyte) would be replaced with free or almost free applications that ride on $0.04 per megabyte data plans, and where carriers’ hands would be tied to prevent it. Taking a business that is currently getting $1.00 per megabyte down to just $0.04 per megabyte is, well, hard. And lest anyone think that this threat is idle fear-mongering, Google’s acquisition last week of Gizmo5, a wireless VoIP specialist, should give one pause.
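For readers who want to check Moffett’s math, here is a minimal sketch that reproduces the per-megabyte figures from the dollar and usage numbers he quotes. The function and variable names are my own, and I follow his simplification of treating text messaging as adding revenue but essentially no data.

```python
# Reproduces the per-megabyte prices from the figures quoted above.
# Names are illustrative; the inputs are the numbers in the quoted passage.

def price_per_mb(monthly_dollars, monthly_megabytes):
    """Average revenue per megabyte for one month of service."""
    return monthly_dollars / monthly_megabytes

# Voice-only customer: about $40 a month for roughly 50 MB
voice = price_per_mb(40, 50)

# Voice plus texting: another $10 a month, treated as no added data
blended = price_per_mb(40 + 10, 50)

# iPhone data: 800 MB total minus 50 MB of voice, for an additional $30
iphone_data = price_per_mb(30, 800 - 50)

print(f"Voice:       ${voice:.2f} per MB")        # $0.80
print(f"Blended:     ${blended:.2f} per MB")      # $1.00
print(f"iPhone data: ${iphone_data:.2f} per MB")  # $0.04
print(f"Blended is about {blended / iphone_data:.0f}x the iPhone data price")
```

On these inputs, the blended voice-and-text price comes out roughly 25 times higher per megabyte than the iPhone data price, which is the gap behind the “bandwidth arbitrage” worry.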

Those are stunning numbers. And then I saw this new filing by CTIA listing some other statistics about growing mobile broadband demand:

Continue reading →

The Technology Liberation Front is the tech policy blog dedicated to keeping politicians' hands off the 'net and everything else related to technology.