Adding to the general clamor, Scott Wallstein of the Progress and Freedom Foundation has released an analysis of Tim Wu’s study endorsing neutrality regulation of wireless networks. Among other things, he points out that the history of the kind of access regulations Wu endorses is not a happy one, pointing to the UNE fiasco. He also raises a good point regarding Wu’s call for carriers to work together to create clear unified standards–arguing that getting acceptable standards is harder than Wu implies. In fact, Wallstein points out, Wu decries one of the leading standards that has been developed, WAP.

Overall, Wallstein concludes that competition in this market is healthy, stating that “the wireless industry is robustly competitive and exhibits scant evidence of a market failure. Consumers consistently benefit from increasingly lower prices and more features.”

Worth reading.

Last year, as the net neutrality wars raged, the wireless industry by and large stayed out of the line of fire. It firmly opposed regulation, no question of that, but the debate focused almost exclusively on the broadband services of telephone and cable companies. Now here comes Tim Wu–a prof at Columbia University and father of the term “net neutrality”–with a paper (to be presented this week to the FTC) arguing that wireless telephone service should be comprehensively regulated under net neutrality principles based on those applied to the old Bell System.

This is a major escalation of the neutrality war, promising to change the character and dynamics of the entire debate.

I have always thought that one of the weak points of the argument for network neutrality last year was its overbreadth. At its core, the argument for neutrality regulation boils down to a claim that broadband markets are not competitive. Yet proposals for regulation virtually never (no, skip the virtually, they never) made exceptions for competitive elements of this market, such as wireless broadband.

Certainly, I thought, it wouldn’t be too long before someone proposed carving out wireless from regulation, so as to focus public attention away from this innovative and fast-growing market. Instead, Wu’s paper goes in the opposite direction–arguing that the wireless industry is in dire need of regulation.

It’s a bold argument. But completely wrong. And, in the end, one that may hurt the entire neutrality regulation effort, by showing how–once unleashed–few will be safe from its reach.

How does Columbia Law Professor Tim Wu justify his proposal to impose massive new regulation on cellphone networks? His forthcoming paper, “Wireless Net Neutrality,” which Jerry discusses here, proposes regulating cellphone networks like common carriers:

(1) Allow any consumer to attach any safe device to their cellphone, and

(2) use the applications of their choice and view the content of their choice.

(3) Require cellphone providers to disclose, fully, prominently, and in plain English, the following information: limits on bandwidth usage; devices that are locked to a single network; and important limitations placed on features; and

(4) reevaluate their “walled garden” approach to application development, and work together to create clear and unified standards to which developers can work.

“Oligopoly”

Wu acknowledges that critics will point to vibrant competition in the cellphone industry as the main reason we don’t need this. Unlike most observers, though, Wu completely dismisses the extreme competitiveness of the industry. According to him, it’s a “textbook oligopoly with four major players, premised on a bottleneck resource.”

Well, in an oligopoly, prices could be expected to rise, service to deteriorate and innovation to suffer. But this is precisely the opposite of what we see in the cellphone space. Cellphones used to be a luxury item; now they’re ubiquitous. The FCC noted that during the five-year period ending in June 2002, the number of cellphone subscribers increased from 48.7 million to 134.6 million. This is a result of falling prices, improved coverage and the introduction of new features. None of this would have happened if the market were broken.

Wu’s oligopoly argument rests on two faulty premises:

Tim Wu will be presenting his paper “Wireless Net Neutrality” at an FTC workshop on network access tomorrow, Wednesday. (BTW: The workshop is free and open to the public.) Basically he’s arguing for Carterfone to be applied to the cell phone industry. The Washington Post has a write-up of the ideas behind the paper and reaction from both sides of the debate.

Until federal regulators issued a landmark ruling in 1968, Americans could not own the telephones in their homes, nor attach answering machines or other devices to them. Now, a growing number of academics and consumer activists say it’s time to deliver a similar groundbreaking jolt to the cellphone industry, possibly triggering a new round of customer options and technical innovations to rival the one that produced faxes, modems and the Internet.

Wireless carriers, which limit what customers may do with their phones, say the move is unnecessary and potentially harmful. But in articles, blogs and speeches, a number of researchers are asking why the companies are allowed to force consumers to buy new handsets when they change carriers, pay a specified carrier to transfer photos from a camera phone, or download ring tones or music from one provider only.

Carterfone was a great decision when it applied to Ma Bell, the quintessential monopoly, but it doesn’t compute for today’s wireless carriers. True, cell phones are locked (except when they’re not, as the article points out, because carriers will often unlock them for you when your contract expires). The one thing the article doesn’t mention is that cell phones are also subsidized. You can always buy an unlocked phone for a premium. I would love to see a greater market in unlocked phones, but if there’s no demand from consumers, I’ll just have to wait along with the proponents of regulation. Question: Unlocked phones are the norm in Asia and Europe. How are they priced there? How do service plan prices compare to those in the U.S.?

In response to my post on network prioritization on Tuesday, Christopher Anderson left a thoughtful comment that represents a common, but in my opinion mistaken, perspective on the network discrimination question:

It’s great for a researcher building a next generation network to simply recommend adding capacity. This has been the LAN model for a long time in private internal networks and LANs with Ethernet have grown in orders of magnitude. This is how Ethernet beat out ATM in the LAN 10 years ago or so. Now that those very same LANs are deploying VOIP they wish for some ATM features such as prioritization. And now they add that prioritization (‘e’ tagging for example) to deploy VOIP more often than they upgrade the whole thing to 10G.

There are, however, business concerns in the real world. Business concerns such as resource scarcity and profit. In an ISP business model, users are charged for service. If usage goes up, but subscriptions do not (e.g. average user consumes more bandwidth) there is financial motivation to prioritize or shape the network, not to add capacity and sacrifice profit with higher outlays of capital, as long as the ‘quality’ or ‘satisfaction’ as observed by the consumer does not suffer.

Anderson is presenting a dichotomy between what we might call the frugal option of using prioritization schemes to make more efficient use of the bandwidth we’ve got and the profligate option of simply building more capacity when we run out of the bandwidth we’ve got. The former is supposed to be the cheap, hard-headed, capitalist way of doing things, while the latter is the sort of thing that works fine in the lab, but is too wasteful to work in the real world.

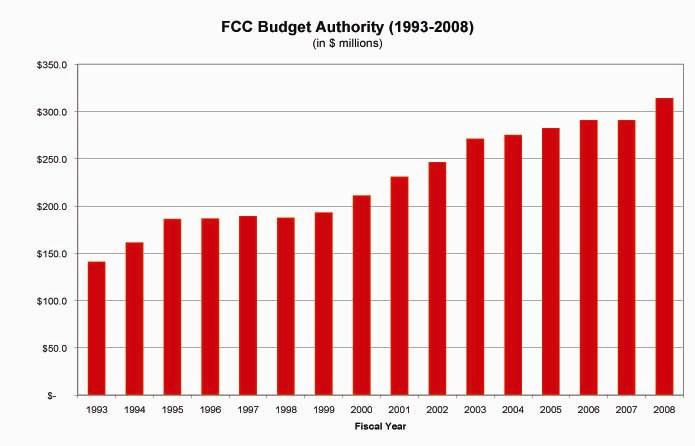

Time for a quick reality check. The Federal Communications Commission regulates older media sectors and communications technologies: broadcast radio, broadcast TV, telephones, satellites, etc. These sectors and technologies are growing increasingly competitive and face myriad new, unregulated rivals. What, then, is wrong with this picture?

Seriously, I just don’t get it. Why does the FCC’s budget keep growing without constraint? Why does it need $313 million and nearly 2,000 bureaucrats to regulate industries and technologies that could do just fine, thank you very much, without endless meddling from DC? It seems to me that all those unregulated rivals are doing just fine without the FCC serving as market nanny, so why not cut the flow of funds to the FCC for a while and see what happens?

This agency needs to be put on a serious diet. There’s just no excuse for this level of spending in an era when the market is growing more competitive. Check out the entire FCC 2008 budget here if you are interested in their profligate spending habits.

(And just imagine how much more the agency will be spending once Net neutrality regulations get on the books!)

One of the most interesting things in Cory Doctorow’s article was a link to this network neutrality write-up from last year in Salon. I thought this was fascinating:

There is fractious division among network engineers on whether prioritizing certain time-sensitive traffic would actually improve network performance. Introducing intelligence into the Internet also introduces complexity, and that can reduce how well the network works. Indeed, one of the main reasons scientists first espoused the end-to-end principle is to make networks efficient; it seemed obvious that analyzing each packet that passes over the Internet would add some computational demands to the system.

Gary Bachula, vice president for external affairs of Internet2, a nonprofit project by universities and corporations to build an extremely fast and large network, argues that managing online traffic just doesn’t work very well. At the February Senate hearing, he testified that when Internet2 began setting up its large network, called Abilene, “our engineers started with the assumption that we should find technical ways of prioritizing certain kinds of bits, such as streaming video, or video conferencing, in order to assure that they arrive without delay. As it developed, though, all of our research and practical experience supported the conclusion that it was far more cost effective to simply provide more bandwidth. With enough bandwidth in the network, there is no congestion and video bits do not need preferential treatment.”

I missed it when it came out last summer but Cory Doctorow has a good article on network neutrality. Good, but a little strange. He makes a lot of good points, but then he ends up at a conclusion that doesn’t seem to follow from the points he made earlier in the article. On the one hand, he rightly points out that the end-to-end principle has been crucial to the success of the Internet to date, and that there’s reason to worry about telcos screwing around with it. On the other hand, he gives some great examples of the challenges regulators are likely to face:

The rules are going to have to do three incredibly tricky things:

1. Define network neutrality. This is harder than it sounds. If a Bell lets Akamai put one of its mirror servers in a central office, then Akamai’s customers can get a better quality of service to the Bell’s customers than those using an Akamai competitor. This is arguably a violation of net neutrality, but how do you solve it? It’s probably not practical to require the Bells to let all comers put local caches on their premises; there’s only so much rack space, after all.

Another tricky case: the University that provides a DSL service to its near-to-campus housing and configures its network to deliver guaranteed throughput to a courseware archive. It gets even stickier if the DSL and/or the courseware archive are supplied by commercial third parties. Poorly written net neutrality regulations could prevent universities from providing those services, which should be allowed.

OK, last post on the Herman paper. I’m especially pleased that he took the time to respond to my “regulatory capture” argument, because to my knowledge, he’s the first person to respond to the substance of my argument (most of the criticism focused on my appearance and my nefarious plot to impersonate the inventor of the web):

Timothy B. Lee insists that BSPs will have more sway than any other group in hearings before the FCC and will therefore “turn the regulatory process to their advantage.” He draws from a vivid historical example of the Interstate Commerce Commission (“ICC”), founded in 1887. “After President Grover Cleveland appointed Thomas M. Cooley, a railroad ally, as its first chairman, the commission quickly fell under the control of the railroads, gradually transforming the American transportation industry into a cartel.” Yet this historic analogy, and its applicability to the network neutrality problem, is highly problematic. Even the ICC, the most cliché example of regulatory capture, was not necessarily a bad policy decision when compared with the alternative of allowing market abuses to continue unabated. One study concludes “that the legislation did not provide railroads with a cartel manager but was instead a compromise among many contending interests.” In contrast with Lee’s very simplistic story of capture by a single interest group, “a multiple-interest-group perspective is frequently necessary to understand the inception of regulation.”

Herman doesn’t go into any details, so it’s hard to know which “market abuses” he’s referring to specifically, but this doesn’t square with my reading on the issue. I looked at four books on the subject that ranged across the political spectrum, and what I found striking was that even the ICC’s defenders were remarkably lukewarm about it. The most pro-regulatory historians contended that the railroad industry needed to be regulated, but that the ICC was essentially toothless for the first 15 years of its existence. At the other extreme is Gabriel Kolko, whose 1965 book Railroads and Regulation, 1877-1916 argues that the pre-ICC railroad industry was fiercely competitive, and that the ICC operated from the beginning as a way to prop up the cartelistic “pools” that had repeatedly collapsed before the ICC’s enactment. Probably the most balanced analyst, Theodore E. Keeler, wrote in a Brookings Institution monograph that in its early years, the ICC “had about it the quality of a government cartel.”

I’m going to wrap up my series on Bill Herman’s paper by considering his response to counter-arguments against new regulations. I think he has the better of the argument on two of the criticisms (“network congestion” and “network diversity”), so I’ll leave those alone, but I disagree with his other two criticisms. Here’s the first, which he dubs the “better wait and see” argument:

Several opponents of network neutrality believe that the best approach is to wait and see. They are genuinely scared of broadband discrimination, but they would rather regulate after the situation has evolved further. The alleged disadvantage is that regulating now removes the chance to create better regulation later, and it accrues the unforeseen consequences described below. Felten provides a particularly visible and eloquent example of this argument. He agrees that neutrality is generally desirable as an engineering principle, but he wishes the threat of regulation could indefinitely continue to deter discrimination.

Unfortunately, the threat of regulation cannot indefinitely postpone the need for actual regulation. In the U.S. political system, most policy topics at most times will be of interest to a small number of policymakers, such as those on a relevant Congressional subcommittee or regulatory commission. This leads to periods of extended policy stability. Yet, as Baumgartner and Jones explain, this “stability is punctuated with periods of volatile change” in a given policy domain. One major source of change, they argue, is “an appeal by the disfavored side in a policy subsystem, or those excluded entirely from the arrangement, to broader political processes–Congress, the president, political parties, and public opinion.”

A key variable in the process is attention. Human attention serves as a bottleneck on policy action, and institutional constraints further tighten the bottleneck. Specialized venues such as the FCC will be able to follow most of the issues under their supervision with adequate attention, but most of the time the “broader political processes” pay no attention to those issues.

As I’ll explain below the fold, I think this dramatically underestimates the success that the pro-neutrality side has had in raising the profile of this issue.

The Technology Liberation Front is the tech policy blog dedicated to keeping politicians' hands off the 'net and everything else related to technology.
