Was yesterday’s groundbreaking deal between BitTorrent and Comcast on network management a triumph for regulation? That’s the storyline being widely peddled in the wake of yesterday’s announcement that the two would work together to solve network management problems.

At first, it looked like the announcement might signal an end to FCC involvement in the dispute. That, at least, was the view of the sensible – but all too lonely – Commissioner Robert McDowell of the FCC. “[I]t is”, he said, “precisely this kind of private sector solution that has been the bedrock of Internet governance since its inception.”


Comcast and BitTorrent are working together to improve the delivery of video files on Comcast’s broadband network.

Rather than slow traffic by certain types of applications — such as file-sharing software or companies like BitTorrent — Comcast will slow traffic for those users who consume the most bandwidth, said Comcast’s [Chief Technology Officer, Tony] Warner. Comcast hopes to be able to switch to a new policy based on this model as soon as the end of the year, he added. The company’s push to add additional data capacity to its network also will play a role, he said. Comcast will start with lab tests to determine if the model is feasible.

Over at Public Knowledge, Jef Pearlman argues that the pioneering joint effort by Comcast and BitTorrent “changes nothing about the issues raised in petitions” before the FCC advocating more regulation, because Comcast and BitTorrent are “commercial entities whose goals are, in the end, to make sure that their networks and technology are as profitable as possible.”

Setting aside whether the pursuit of profit is a good thing or not, what this episode actually proves is that the Federal Communications Commission has done its job: the threat of regulation is a credible deterrent to unreasonable discrimination by broadband service providers, and we don’t need a new regulatory framework with the unintended consequences that regulation always entails.


Technology blogger Ike Elliott has a terrific series underway over at his blog this week looking at the differences between cable and telco-fiber infrastructures. He is “looking at why cable companies are kicking the tires on fiber-based passive optical networks, even though they have a heavy investment in hybrid fiber coax (HFC) networks.” The series is a good primer on these issues. Here are the entries in his series so far:

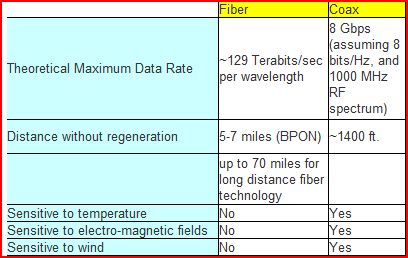

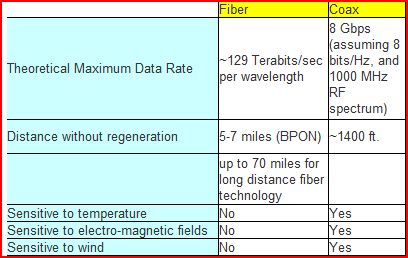

And here’s a handy table that Ike put together comparing the capabilities of fiber vs. coax:

I want to associate myself with Adam’s excellent comments about Jonathan Zittrain’s book. I haven’t read the book yet, so I won’t try to comment on the specifics of Zittrain’s argument, but it strikes me that if Adam is summarizing the book fairly, Zittrain’s thesis is strikingly similar to the thesis of Larry Lessig’s Code: The open Internet is great, but if we don’t take action soon it will turn into a bad, proprietary, corporatized network. I’ve been mildly surprised at how little comment there’s been on how spectacularly wrong Lessig’s specific predictions in Code turned out to be. Lessig was absolutely convinced that a system of robust user authentication would put an end to the Internet’s free-wheeling, decentralized nature. Not only has that not happened, but I suspect that few would seriously argue that Lessig’s specific prediction will still come to pass.

But while Lessig’s specific prediction turned out to be wrong, the general thrust of his argument—that open systems are unstable and will implode unless managed just right—is alive and well. I think that basic claim is still wrong. And I think it’s not a coincidence that these kinds of critiques often come from the left-hand side of the political spectrum (I don’t actually know Zittrain’s politics, but Lessig is certainly a leftie). It seems to me that left-of-center techies are in a bit of an awkward position because on the one hand they’ve fallen in love with the open, decentralized architecture that is epitomized by the Internet, but are predisposed to criticize the open, decentralized economic system called the free market. As a result, they wind up taking the somewhat incongruous stance that to preserve the decentralized nature of our technological systems, we need to have more centralization of our economic and political system. Zittrain’s choice of the Manhattan Project as a metaphor for the way to preserve the Internet’s openness is particularly striking, because of course the Manhattan Project was the absolute antithesis of the philosophy behind TCP/IP. It was a hierarchical, secret, centrally planned effort that left no room for dissent, diversity or public scrutiny.


Is increasing use of video likely to cause Internet delays? The New York Times today floats the theory that it might be.

But at least the article generously quotes a leading skeptic: Andrew Odlyzko, a Professor of Mathematics and Director of the Digital Technology Center and the Minnesota Supercomputing Institute at the University of Minnesota.

[Odlyzko] estimates that digital traffic on the global network is growing about 50 percent a year, in line with a recent analysis by Cisco Systems, the big network equipment maker.

That sounds like a daunting rate of growth. Yet the technology for handling Internet traffic is advancing at an impressive pace as well. The router computers for relaying data get faster, fiber optic transmission gets better and software for juggling data packets gets smarter.

“The 50 percent growth is high. It’s huge, but it basically corresponds to the improvements that technology is giving us,” said Professor Odlyzko, a former AT&T Labs researcher. Demand is not likely to overwhelm the Internet, he said.

Odlyzko will be in Arlington, Va., next Tuesday, March 18, giving a “Big Ideas About Information” lecture at the Information Economy Project at the George Mason University School of Law.

Back in 1999, when everyone was saying that the Internet was doubling every three months, or 1500 percent annual growth, Odlyzko was the voice of reason: the Internet was only growing at 100 percent per year.
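For anyone who wants to check the arithmetic behind those growth figures, here is a minimal sketch. It assumes steady compound growth, and the function names are my own, purely for illustration. Doubling every three months means four doublings a year, or a 16-fold increase, which is 1500 percent growth.

```python
import math


def annual_growth_percent(doubling_period_months: float) -> float:
    """Percent growth over 12 months for traffic that doubles
    every `doubling_period_months` months (compound growth assumed)."""
    doublings_per_year = 12 / doubling_period_months
    return (2 ** doublings_per_year - 1) * 100


def doubling_period_months(growth_percent_per_year: float) -> float:
    """Months needed to double at a steady annual growth rate."""
    yearly_factor = 1 + growth_percent_per_year / 100
    return 12 * math.log(2) / math.log(yearly_factor)


print(annual_growth_percent(3))    # doubling every three months -> 1500.0
print(annual_growth_percent(12))   # doubling once a year -> 100.0
print(doubling_period_months(50))  # 50 percent a year -> roughly 20.5 months
```

In other words, the gap between the 1999 hype and Odlyzko's estimate was the gap between traffic doubling every quarter and traffic doubling once a year.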

In his “Big Ideas about Information” lecture next Tuesday, Professor Odlyzko will compare the Internet bubble of the turn of the century with the British Railway Mania of the 1840s, the greatest technology mania in history – up until the Dot.com bubble. In both cases, clear evidence indicated that financial instruments would crash.

The event, at 4 p.m., is the latest in a series sponsored by IEP, which is directed by Professor Tom Hazlett. (I serve as Assistant Director of the project.) Can’t make it to Arlington, Va., for the “Big Ideas” lecture? Join us remotely, by Webcast, or over the phone, at TalkShoe.

Well, I think many of us here can appreciate Lawrence Lessig’s call to “blow up the FCC,” as he suggested in an interview with National Review this week. But I wonder, who, then, would be left to enforce his beloved net neutrality mandates and the media ownership rules he favors? He’s advocated regulation on both those fronts, but it ain’t gonna happen without some bureaucrats around to fill out the details and enforce all the red tape.

Regardless, I wholeheartedly endorse his call for sweeping change. Here’s what he told National Review:

One of the biggest targets of reform that we should be thinking about is how to blow up the FCC. The FCC was set up to protect business and to protect the dominant industries of communication at the time, and its history has been a history of protectionism — protecting the dominant industry against new forms of competition—and it continues to have that effect today. It becomes a sort of short circuit for lobbyists; you only have to convince a small number of commissioners, as opposed to convincing all of Congress. So I think there are a lot of places we have to think about radically changing the scope and footprint of government.

Amen, brother. If he’s serious about this call, then I encourage Prof. Lessig to check out the “Digital Age Communications Act” project that over 50 respected, bipartisan economists and legal scholars penned together to start moving us down this path.

The rural broadband debate has been in the news a lot lately. Yesterday, DSL Reports ran a story sharply criticizing a report released by the US Internet Industry Association (an ISP lobbyist firm). But as Ars pointed out, the report actually offers some facts revealing that broadband availability in the U.S. isn’t nearly as bad as some have suggested.

79% of homes with a phone line can now get DSL, and 96% of homes with cable can get broadband. Considering that just about every home has a phone line, and most people have cable, these numbers suggest the main reason for the lack of rural broadband users isn’t a lack of availability but a lack of adoption. Of course, rural areas have slower speeds and higher prices than urban areas. This makes sense, because building out a network in low-density areas costs more per subscriber than in urban areas, where a single apartment complex can house hundreds of users.

Still, groups argue that massive government subsidies are needed to promote broadband deployment in rural areas. ConnectedNation (a Washington-based non-profit) released a report a couple weeks ago, “The Economic Impact of Stimulating Broadband Nationally”, which concluded that accelerating broadband could pump $134 billion into the U.S. economy.


Mari Silbey of MediaExperiences2Go has an interesting post about “The Changing Face of Concurrency.” She examines the various metrics companies and analysts use to study bandwidth flows or to model network activity. These include households passed, penetration ratio, concurrency ratio, and bandwidth. Concurrency represents the number of subscribers likely to be tuned in or logged on at any given time, which is obviously important when measuring or estimating cable bandwidth, since cable is a shared network. It’s not enough simply to examine penetration ratios or aggregate bandwidth measures when debating network management policies. Concurrency ratios give us a better way to measure what is possible on existing cable infrastructure.
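To make the relationship between those metrics concrete, here is a rough sketch of how they might combine on a shared cable segment. The formulas are my own simplification rather than anything taken from Silbey's post, and every number and name below is hypothetical.

```python
def concurrent_users(households_passed: int,
                     penetration_ratio: float,
                     concurrency_ratio: float) -> float:
    """Estimated number of subscribers active at the same time.

    Assumes penetration_ratio is the share of passed households that
    subscribe and concurrency_ratio is the share of subscribers online
    at once (an illustrative simplification).
    """
    subscribers = households_passed * penetration_ratio
    return subscribers * concurrency_ratio


def mbps_per_active_user(shared_capacity_mbps: float,
                         households_passed: int,
                         penetration_ratio: float,
                         concurrency_ratio: float) -> float:
    """Shared capacity divided evenly among the concurrently active users."""
    active = concurrent_users(households_passed, penetration_ratio,
                              concurrency_ratio)
    return shared_capacity_mbps / active


# Hypothetical segment: 500 homes passed, half subscribe, and a quarter of
# subscribers are online at once, sharing 50 Mbps of downstream capacity.
print(concurrent_users(500, 0.5, 0.25))
print(mbps_per_active_user(50, 500, 0.5, 0.25))
```

Holding penetration and capacity fixed, doubling the concurrency ratio halves the bandwidth available to each active user, which is why penetration and aggregate bandwidth alone don't tell you much.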

More broadly, all this matters because we need a common set of metrics when evaluating issues that come up in Net neutrality debates, since opponents often use different terms and measures when discussing them. Anyway, I just thought I would highlight her article for that reason.

An inconvenient fact (for opponents of network management):

A survey by the Japan Internet Providers Association shows 40% of Japanese ISPs perform network management, according to Yomiuri Shimbun, and the trend is growing.

Of the 276 respondents, 69 companies said they restricted information flow through their lines. A total of 106 companies, including those that rent lines from infrastructure owners, impose such restrictions. Twenty-nine companies said they were planning to take similar measures.
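As a quick sanity check on the 40% figure, here is the arithmetic on the counts quoted above. It assumes that all three counts are shares of the same 276 respondents, which the excerpt implies but doesn't state outright. The variable and function names are mine.

```python
# Counts as reported in the survey excerpt above.
respondents = 276
restrict_own_lines = 69   # companies restricting flow through their lines
restrict_total = 106      # total, including those that rent lines
planning = 29             # companies planning similar measures


def share_percent(count: int, total: int) -> float:
    """Share of `total` represented by `count`, as a percentage (1 decimal)."""
    return round(count / total * 100, 1)


print(share_percent(restrict_own_lines, respondents))         # 25.0
print(share_percent(restrict_total, respondents))             # 38.4
print(share_percent(restrict_total + planning, respondents))  # 48.9
```

On that reading, the 40% headline corresponds to the 106 companies already imposing restrictions, and the share approaches half once the 29 planning to follow are counted.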

I’ve finally finished a draft of the network neutrality paper I’ve been blogging about for the last few months. One of the things I learned after the publication of my previous Cato paper is that, especially when you’re writing about a technical subject, you’ll inevitably make some errors that will only be caught when a non-trivial number of other people have the chance to read it. So if any TLF readers are willing to review a pre-release draft of the paper and give me their comments, I would appreciate the feedback. Please email me at leex1008@umn.edu and I’ll send you a copy. Thanks!

The Technology Liberation Front is the tech policy blog dedicated to keeping politicians' hands off the 'net and everything else related to technology.
