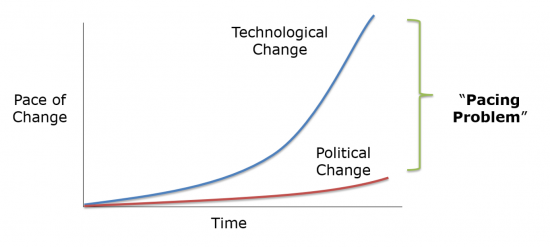

I recently posted an essay over at The Bridge about “The Pacing Problem and the Future of Technology Regulation.” In it, I explain why the pacing problem—the notion that technological innovation is increasingly outpacing the ability of laws and regulations to keep up—“is becoming the great equalizer in debates over technological governance because it forces governments to rethink their approach to the regulation of many sectors and technologies.”

In this follow-up article, I want to expand upon some of the themes developed in that essay and discuss how they relate to two other important concepts: the “Collingridge Dilemma” and technological determinism. In doing so, I will build on material that is included in a forthcoming law review article I have co-authored with Jennifer Skees and Ryan Hagemann (“Soft Law for Hard Problems: The Governance of Emerging Technologies in an Uncertain Future”) as well as a book I am finishing up on the growth of “evasive entrepreneurialism” and “technological civil disobedience.”

Recapping the Nature of the Pacing Problem

First, let us quickly recap the nature of “the pacing problem.” I believe Larry Downes did the best job explaining the “problem” in his 2009 book, The Laws of Disruption. Downes argued that “technology changes exponentially, but social, economic, and legal systems change incrementally” and that this “law” was becoming “a simple but unavoidable principle of modern life.”

Downes was generally a cheerleader for such developments. For him, the pacing problem is more like the pacing benefit. But Downes is in the minority among tech policy scholars in this regard. In the field of Science and Technology Studies (STS), discussions about the pacing problem and what to do about it are omnipresent and full of foreboding.

STS is a broad field of interdisciplinary studies unified by a concern with “the impacts and control of science and technology, with particular focus on the risks, benefits and opportunities that S&T may pose” to a wide range of values. STS incorporates many disciplines: legal and philosophical studies, sociology, anthropology, engineering, and others. In countless essays, papers, journal articles, and books, STS scholars lament the pacing problem and insist something must be done, often without ever getting around to explaining what that something is.

Regardless of their field of study, there is broad recognition among these scholars that new technological, social, and political realities make the pacing problem a phenomenon worth studying. In my Bridge essay, I identified three primary drivers of the pacing problem:

- Technological driver: The power of “combinatorial innovation,” which is driven by “Moore’s Law,” fuels a constant expansion of technological capabilities.

- Social driver: Citizens quickly assimilate new tools into their daily lives and then expect that even more and better tools will be delivered tomorrow.

- Political driver: Government has grown increasingly dysfunctional and unable to adapt to those technological and social changes.

The “Collingridge Dilemma”

Although they do not always refer to it by name, STS scholars regularly stress the so-called “Collingridge dilemma” in their work. The Collingridge dilemma refers to the extreme difficulty of putting proverbial genies back in their bottles once a given technology has reached a certain inflection point in society. The concept is named after David Collingridge, who wrote about the challenges of governing emerging technologies in his 1980 book, The Social Control of Technology.

“The social consequences of a technology cannot be predicted early in the life of the technology,” Collingridge argued. “By the time undesirable consequences are discovered, however, the technology is often so much part of the whole economics and social fabric that its control is extremely difficult.” He called this the “dilemma of control,” and asserted that, “When change is easy, the need for it cannot be foreseen; when the need for change is apparent, change has become expensive, difficult and time-consuming.”

In a sense, the “Collingridge dilemma” is simply a restatement of the pacing problem but with (1) greater stress on the social drivers behind the pacing problem and (2) an implicit solution to “the problem” in the form of preemptive control of new technologies while they are still young and more manageable.

Specifically, for many STS scholars, Collingridge’s “dilemma” is preferably solved through the application of the Precautionary Principle. The contours of the Precautionary Principle are notoriously murky and ill-defined. Nonetheless, as I discussed at great length in my last book on the subject, the Precautionary Principle generally refers to the belief that new innovations should be curtailed or disallowed until their developers can prove that they will not cause any harm to individuals, groups, specific entities, cultural norms, or various existing laws or traditions.

You can see the logic of the Collingridge dilemma and the Precautionary Principle at work everywhere in STS scholarship today. Few scholars want to admit they favor the Precautionary Principle, however, so they often use different terminology. “Anticipatory governance” or “upstream governance” are the preferred terms of art these days.

For example, in a recent law review article about “Regulating Disruptive Innovation,” Nathan Cortez argues that “new technologies can benefit from decisive, well-timed regulation” or even “early regulatory interventions.” Similarly, writing in Slate in 2014, John Frank Weaver insisted we should regulate emerging tech like artificial intelligence “early and often” to “get out ahead of” various social and economic concerns.

In his last book, A Dangerous Master: How to Keep Technology from Slipping beyond Our Control, bioethicist Wendell Wallach also argued for new forms of upstream governance and defined it as a system that allows for “more control over the way that potentially harmful technologies are developed or introduced into the larger society. Upstream management is certainly better than introducing regulations downstream, after a technology is deeply entrenched, or something major has already gone wrong,” he argued. Wallach is basically just restating the Collingridge dilemma in this regard.

The problem with all these calls for anticipatory or upstream governance solutions to the pacing problem and the Collingridge dilemma is that, like the Precautionary Principle more generally, the specific solutions are often incoherent or sometimes completely lacking. STS scholars almost always leave the reader hanging without offering a conclusion to their gloomy, pessimistic narratives about whatever technology or technological process it is they are critiquing. Critics are quick to issue bold calls-to-action, but rarely provide a detailed blueprint.

There are some exceptions. Some STS scholars have advocated for Precautionary Principle-minded legislation or agencies, like an “Artificial Intelligence Development Act,” a “National Algorithmic Technology Safety Administration” or a federal AI agency, such as a “Federal Robotics Commission.” Meanwhile, over the past decade, many STS scholars have pushed for national privacy and cybersecurity legislation, or expansive new forms of liability for technology companies. The regulatory authority sought in these cases would be squarely precautionary in character, aimed at addressing a wide array of hypothetical harms through permission-based rulemaking before those problems even materialize.

Technological Determinism?

Discussions about the pacing problem and the Collingridge dilemma have an air of technological determinism to them. Technological determinism generally refers to the notion that technology almost has a mind of its own and that it will plow forward without much resistance from society or governments. Here is a more scholarly definition from Sally Wyatt, who has explained how technological determinism is generally defined in a two-part fashion:

The first part is that technological developments take place outside society, independently of social, economic, and political forces. New or improved products or ways of making things arise from the activities of inventors, engineers, and designers following an internal, technical logic that has nothing to do with social relationships. The more crucial second part is that technological change causes or determines social change.

The opposite of technological determinism is usually referred to as “social constructivism,” which as Thomas Hughes notes, “presumes that social and cultural forces determine technical change.”

Ironically, among STS scholars, technological determinist reasoning is both (a) regularly on display, and (b) generally reviled. That is, many STS scholars speak in deterministic tones about the inevitability of certain technological developments, but then they effortlessly shift into social constructivist mode when commenting on what they hope to do about it.

One of the most well-known technology critics of the past century was French philosopher Jacques Ellul. It is impossible to read his tracts and not find deterministic reasoning flying off every other page. He argued, for example, that technology is “self-perpetuating, all-persuasive, and inescapable,” and that it represents “an autonomous and uncontrollable force that dehumanized all that it touches.” Moreover, within the field of Marxist studies, technological determinism is ubiquitous. Of course, that goes back to Marx himself and his many ideological descendants, who held strongly deterministic views about the role industrial technology played in shaping history and socio-political systems. Plenty of other STS scholars remain hard-core social constructivists, however, and insist that dealing with the pacing problem and the Collingridge dilemma really just comes down to a matter of sheer social and political willpower.

Techno-determinist thinking is usually on display in more vivid terms among technological optimists. Reading the writings of futurists like Ray Kurzweil and Kevin Kelly, one cannot help but get the sense that they are pining for the day when we are all just assimilated into The Matrix. There is an air of utter futility associated with humanity’s efforts to resist the spread of various technological systems and processes. Philosopher Michael Sacasas refers to this mentality as “the Borg Complex,” which, he says, is often “exhibited by writers and pundits who explicitly assert or implicitly assume that resistance to technology is futile.”

The point I am trying to make here is that technological determinism is at work in all sorts of scholarship and punditry. Regardless of whether one subscribes to what Ian Barbour has labelled the warring viewpoints of “Technology as Liberator” or “Technology as a Threat,” very different people can hold strongly deterministic viewpoints.

Soft Determinism

The problem with all this talk about determinism—technological, social, political, or whatever—is that the lines are never quite as bright as some suggest. “Hard” determinism of any of these varieties simply cannot be correct. We have too many historical examples that run counter to both narratives.

Personally, I’ve always subscribed to what some refer to as “soft technological determinism.” Technological historian Merritt Roe Smith defines “soft determinism” as the view “which holds that technological change drives social change but at the same time responds discriminatingly to social pressures,” as compared to “hard determinism,” which “perceives technological development as an autonomous force, completely independent of social constraints.”

Konstantinos Stylianou has offered a variant of soft determinism that zeroes in on better understanding the unique attributes of specific technologies and political systems when considering how difficult they may be to control. He argues that “there are indeed technologies so disruptive that by their very nature they cause a certain change regardless of other factors,” such as the Internet. Stylianou concludes that:

It seems reasonable to infer that the thrust behind technological progress is so powerful that it is almost impossible for traditional legislation to catch up. While designing flexible rules may be of help, it also appears that technology has already advanced to the degree that is able to bypass or manipulate legislation. As a result, the cat-and-mouse chase game between the law and technology will probably always tip in favor of technology. It may thus be a wise choice for the law to stop underestimating the dynamics of technology, and instead adapt to embrace it.

That may sound like just more hard deterministic thinking, but it represents a softer variety that holds that the special characteristics of some technologies are indeed altering our capacity to govern many newer sectors using traditional regulatory mechanisms. In my new law review article with Jennifer Skees and Ryan Hagemann, we conclude that this is the key factor motivating the gradual move away from “hard law” and toward “soft law” governance tools for a great many emerging technologies.

To be clear, this does not mean we are going to soon reach the proverbial “end of politics” or the “death of the nation-state” due to technology, or anything like that. As I point out in my forthcoming book, that sort of talk is silly. Some technology enthusiasts or libertarians use techno-determinist talk as if they are preaching a gospel of liberation theology—liberation from the state through technology emancipation, that is.

In reality, technology giveth and technology taketh away. Technology can empower people and institutions and help them challenge laws, regulations, and entire political systems. My forthcoming book documents how many “evasive entrepreneurs” are doing just that today, and with increasing regularity. But technology empowers government actors, too. In an unpublished 2009 manuscript entitled, “Does Technology Drive the Growth of Government?” my Mercatus Center colleague Tyler Cowen noted how growth of big government in the 20th century was greatly facilitated by various modern technologies (advanced transportation and communications networks, in particular). “Future technologies may either increase or decrease the role of government in society,” he noted, “but if history shows one thing, it is that we should not neglect technology in understanding the shift from an old political equilibrium to a new one.”

Thus, those who think that the pacing problem is a one-way ratchet to emancipation from state control need to realize that technology can be used for good and bad ends, and it can be used (and abused) by governments to expand their powers and limit our liberties. Similarly, those tech critics and STS scholars who lament how the pacing problem will undermine governments, democracy, or other institutions or values without radical interventions also are going too far. They need to recognize that while it is true many new technologies will march forward at a steady clip, it does not mean that society is powerless to bring some order to technological processes. We shape our tools and then our tools shape us. And then we create still more tools to improve upon previous tools, and the process goes on and on.

John Seely Brown and Paul Duguid put it best in this 2001 essay responding to “doom-and-gloom technofuturists”:

[T]echnological and social systems shape each other. The same is true on a larger scale. . . . Technology and society are constantly forming and reforming new dynamic equilibriums with far-reaching implications. The challenge . . . is to see beyond the hype and past the over-simplifications to the full import of these new sociotechnical formations.

So yes, the pacing problem is real, and it will continue to raise problems for social and political systems. But as Brown and Duguid suggest, we’ll constantly adapt, form and reform new dynamic equilibriums, and then “muddle through,” just as we have so many times before.

Related Reading

- “The Pacing Problem and the Future of Technology Regulation”

- “Muddling Through: How We Learn to Cope with Technological Change”

- “A Short Response to Michael Sacasas on Advice for Tech Writers”

- “Are “Permissionless Innovation” and “Responsible Innovation” Compatible?”

- “Wendell Wallach on the Challenge of Engineering Better Technology Ethics”

- “Book Review: Calestous Juma’s ‘Innovation and Its Enemies’”

- “Evasive Entrepreneurialism and Technological Civil Disobedience: Basic Definitions”

The Technology Liberation Front is the tech policy blog dedicated to keeping politicians' hands off the 'net and everything else related to technology.
