I participated last week in a Techdirt webinar titled, “What IT needs to know about Law.” (You can read Dennis Yang’s summary here, or follow his link to watch the full one-hour discussion. Free registration required.)

The key message of The Laws of Disruption is that IT and other executives need to know a great deal about law—and more all the time. And Techdirt does an admirable job of reporting the latest breakdowns between innovation and regulation on a daily basis. So I was happy to participate.

Legally-Defensible Security

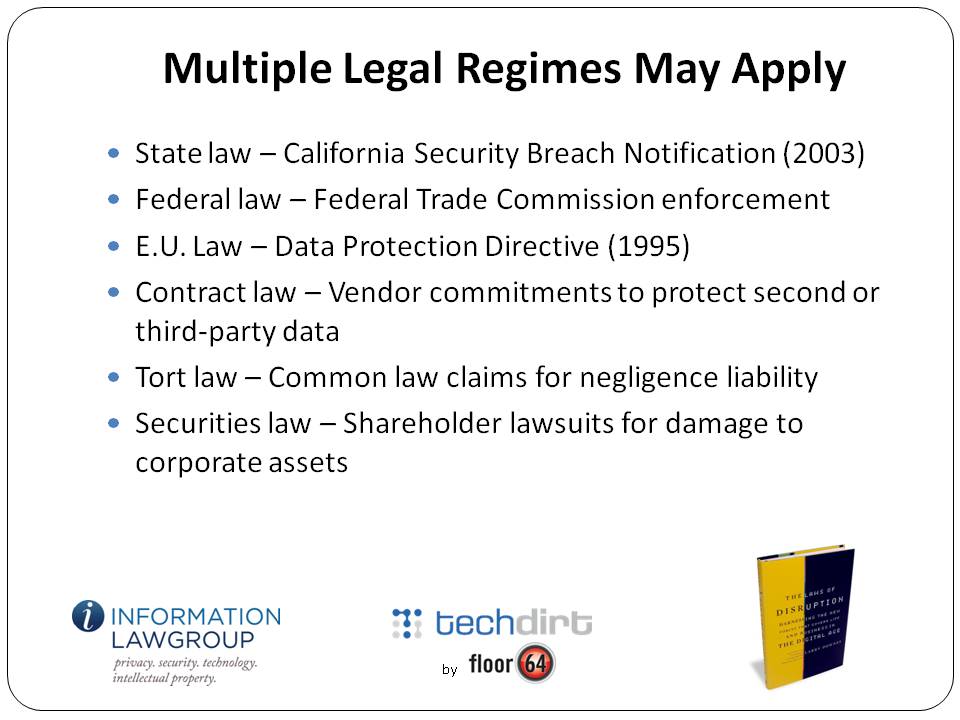

Not surprisingly, there were far too many topics to cover in a single seminar, so we decided to focus narrowly on just one: potential legal liability when data security is breached, whether through negligence (lost laptop) or the criminal act of a third party (hacking attacks). We were fortunate to have as the main presenter David Navetta, founding partner with The Information Law Group, who had recently written an excellent article on what he calls “legally-defensible security” practices.

I started the seminar off with some context, pointing out that one of the biggest surprises for companies in the Internet age is the discovery that having posted a website on the World Wide Web, they are suddenly and often inappropriately subject to the laws and jurisdiction of governments around the world. (How wide is the web? World.)

In the case of security breaches, for example, a company may be required under state law to disclose the incident to affected third parties (customers, employees, etc.). At the other extreme, executives of the company handling the data may be criminally liable if the breach involved personally identifiable information of citizens of the European Union (e.g., the infamous Google Video case in Italy earlier this year, which is pending appeal). Individuals and companies affected by a breach may also sue the company under a variety of common law claims, including breach of contract (perhaps the violation of a stated privacy policy) or simple negligence.

The move to cloud computing amplifies and accelerates the potential nightmares. In the cloud model, data and processing are subcontracted over the network to a potentially wide array of providers who offer economies of scale, application or functional expertise, scalable hardware, or proprietary software. Data is everywhere, and its disclosure can occur in an exploding number of inadvertent ways. If a security breach occurs in the course of any given transaction, just untangling which parties handled the data, let alone who let it slip out, could be a logistical (and litigation) nightmare.

The Limits of Negligence

Not all security breaches involve private or personal information, but it’s not surprising that the most notable breakdowns in security (or at least the most vividly reported) are those that expose consumer or citizen data, sometimes for millions of affected parties. (Some of the most egregious losses have involved government computers left unsecured, with sensitive citizen data unencrypted on the hard drive.) Consumer computing activity has surpassed corporate computing and is growing much faster. Privacy and security are topics that are increasingly hard to disentangle.

Which is not to say that the bungling of data that affects millions of users necessarily translates to legal consequences for the company that held the information. Under current law, even the most irresponsible behavior by a data handler often creates no liability at all.

For one thing, U.S. law does not require companies to spare no expense in protecting data. As David Navetta points out, courts may find that despite a breach the precautions taken may have nonetheless been economically sensible, meaning that the precautions taken were justified given the likelihood of a breach and the potential consequences that followed. Adherence to ISO or other industry standards on data security may be sufficient to insulate a company from liability—though not always. (Courts sometimes find that industry standards are too lax.)

For the most part, tort law still follows the classic negligence formula of the beatified American jurist Learned Hand, who explained that the duty of courts was to encourage behavior by defendants that made economic sense. If courts found liability any time a breach occurred, then data handlers would be incentivized to spend inefficient amounts of money on protecting it, leading to net social loss. (The classic cases involved sparks from locomotives causing fire damage to crops—perfect avoidance of damage, the courts ruled, would cost too much relative to the harm caused and the probability of it occurring.)
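The formula referred to above is usually written in the shorthand below, which comes from Hand's opinion in United States v. Carroll Towing Co. (1947). The symbols B, P, and L are the conventional labels; the dollar figures in the worked example are purely hypothetical and are not drawn from any case discussed here.

```latex
% Hand formula: a defendant is negligent if the burden of the
% precaution is less than the expected loss it would have prevented.
%   B = burden (cost) of taking the precaution
%   P = probability that the harm occurs without it
%   L = magnitude of the loss if the harm occurs
\[
  B < P \cdot L \quad\Longrightarrow\quad \text{omitting the precaution is negligent}
\]

% Hypothetical example: a 2\% annual chance of a breach costing \$5{,}000{,}000.
\[
  P \cdot L = 0.02 \times \$5{,}000{,}000 = \$100{,}000 \text{ per year}
\]
% A safeguard costing \$40{,}000 per year satisfies B < PL, so skipping it looks negligent.
% A safeguard costing \$400{,}000 per year does not, so skipping it is economically defensible.
```

Read this way, the formula does not ask whether a breach could have been prevented at any price, only whether the precaution that was skipped would have cost less than the expected harm.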

That, at least, is the common law regime that applies in the U.S. The E.U., under laws enacted in support of its 1995 Privacy Directive, follows a different rule, one that comes closer to product liability law, where any failure leads to per se liability for the manufacturer, or indeed for any company in the chain of sales to a consumer.

A case last week from the Ninth Circuit Court of Appeals, however, reminds us that a finding of liability doesn’t necessarily lead to an award of damages. In Ruiz v. Gap, a job applicant sued Gap after two laptop computers containing applicants’ personal information were stolen from a Gap vendor that was processing applications. Ruiz claimed to represent a class of applicants who were victims of the loss.

All of Ruiz’s claims, however, were rejected. Affirming the lower court and agreeing with most other courts to consider the issue, the Ninth Circuit held that Ruiz could not sue Gap without a showing of “appreciable and actual damage.” The cost of forward-looking credit monitoring didn’t count (Gap offered to pay Ruiz for that in any case), nor did speculative claims of future losses. Actual losses, expressible and provable in monetary terms, were required.

The court also rejected claims under California state law and the state constitution, noting that an “invasion of privacy” does not occur until there is actual misuse of the data contained on the stolen laptops. (Most laptop thefts are presumably motivated by the value of the hardware, not any data that might reside on the hard drive.)

As Eric Goldman succinctly points out, the Ninth Circuit case highlights some odd behavior by plaintiff class action lawyers in the recent hubbub involving Facebook, Google, and other companies that either change their privacy policies or use customer data in ways that arguably violate those policies. “[T]he most disturbing thing,” Eric writes, “is that so many plaintiffs’ lawyers seem completely uninterested in pleading how their clients suffered any consequence (negative or otherwise) from the gaffe at all. Their approach appears to be that the service provider broke a privacy promise, res ipsa loquitur, now write us a check containing a lot of zeros.”

A Surprising Lack of Law – And an Alternative Model for Redress

It’s not just the lawyers who are confused here. U.S. consumers, riled up by stories in mainstream media, seem to live under the misapprehension that they have some legal right to privacy, or to the protection of personal information, that can be enforced in court against corporations.

That is true in the E.U., but not in the U.S. The Constitutional “right to privacy” detailed in U.S. Supreme Court decisions of the last fifty years only applies to protections against government behavior. There is no Constitutional right to privacy that can be enforced against employers, business partners, corporations, parents, or anyone else.

What about statutes? With a few specific exceptions for medical information, credit history, and a few other categories, there is also no federal law, and for the most part no state law, that protects consumer privacy against corporations. There’s no law that requires a website to publish its privacy policy, let alone follow it. Even if a privacy policy constitutes an enforceable contract (not entirely a settled matter of law), the Ruiz case reminds us that breach of contract is irrelevant without evidence of actual monetary damages.

Before storming the barricades demanding justice, however, keep in mind that the law is not the only source of a remedy. (Indeed, law is rarely the most efficient or effective in any case.)

The lack of a legal remedy for misuse of private information doesn’t mean that companies can do whatever they like with data they collect, or need take no precautions to ensure that information isn’t lost or stolen.

As more and more personal and even intimate data migrates to the cloud, it has become crystal-clear that consumers are increasingly sensitive (perhaps, economically speaking, over-sensitive) about what happens to it. Consumers express their unhappiness in a variety of media, including social networking sites, blogs, emails, and tweets. They can and do put economic pressure on companies whose behavior they find unacceptable: through boycotts, by switching to other providers, and through activism that damages the brand of the miscreant.

Even if the law offers no remedy, in other words, the court of public opinion has proven quite effective. Even without a court ordering them to do so, some of the largest data handlers have made drastic changes to their policies, software, and how they communicate with users.

Looming in the background of these stories is always the possibility that if companies fail to appease their customers, the customers will lobby their elected representatives to provide the kind of legal protections that so far haven’t proven necessary. But given the mismatch between the pace of innovation and the pace of legal change, legislation should always be the last, not the first, resort.

So expect lots more stories about security breaches, and expect most of them to involve the potential disclosure of personal information. (That’s one reason that laws requiring disclosure of breaches are a good idea. Consumers can’t flex their power if they are kept in the dark about behavior they are likely to object to.)

And that means, as we concluded in the seminar, that IT executives making security decisions had better start talking to their counterparts in the general counsel’s office.

Because as hard as it is for those two groups to talk to each other, it’s much harder to have a conversation after a breach than before. IT makes decisions that affect the legal position of the company; lawyers make decisions that affect the technical architecture of products and services. The question isn’t whether to formulate a legally-defensible security policy, in other words, but when.

The Technology Liberation Front is the tech policy blog dedicated to keeping politicians' hands off the 'net and everything else related to technology.
