Noam Cohen has a great piece in The New York Times today, “In Allowing Ad Blockers, a Test for Google,” explaining how Google’s decision about allowing ad-blocking extensions for the new beta version of its Chrome browser puts Google’s much-ballyhooed talk about openness to the test. So far, Google is passing, with two such extensions (AdThwart & AdBlock) available in Chrome’s extensions gallery. With a combined 130,000+ users, these tools seem destined to be as popular among Chrome users as Adblock Plus has been among Firefox users: nearly 67,000,000 downloads and, according to the Times piece, 7+ million active users.

Google has taken a sanguine attitude towards the issue, perhaps because, as Adblock Plus creator Wladimir Palant notes, “Ad blockers are still used by a tiny proportion of the Internet population, and these aren’t the kind of people susceptible to ads anyway.” (In other words, the users most likely to download an ad-blocking extension are likely to be more “ad-blind” and less likely to click on ads anyway.) Google’s director of engineering has noted how cautiously Google weighed its decision to allow ad-blocking programs “because Google makes all of its money from advertising.” But in the end, as the Times notes:

[H]e explained that the prevailing thinking was that “it’s unlikely ad blockers are going to get to the level where they imperil the advertising market, because if advertising is so annoying that a large segment of the population wants to block it, then advertising should get less annoying.”

“So I think the market will sort this out,” he said. “At least that is the bet we made when we opened the extension gallery and didn’t have any policy against ad-blockers.”

Michael Gundlach, creator of the AdBlock extension for Chrome (no direct connection to Palant’s Adblock Plus for Firefox, despite the similar names),

who once worked for Google in Ireland helping to ensure that ads kept appearing on Web sites, says he does not fear for media companies that increasingly rely on online ad revenue. Sounding like a firm believer of Mr. Rosenberg’s embrace-the-chaos manifesto, Mr. Gundlach said a brighter day would emerge from the challenge of ad blockers.

Extensions like his, he said, will make “every one else change their ways, to make ads more useful. Everyone wins, that’s competition. The ideal result would be to retire this extension because the entire Web was covered with ads that people loved and no one wanted to block them.”

What is this but a call for more relevant and less annoying web ads? Ironically, of course, personalized advertising is currently under attack on all fronts, and some experts have asserted that users don’t really want ads tailored to their interests (or, for that matter, news or discounts, either) on the basis of highly questionable opinion polls, as I’ve described.

One of the themes you come across again and again in public policy debates about privacy, advertising, marketing, or even free speech battles, is the notion that the public at large is made up of mindless sheep being duped at every turn. And, as Berin Szoka and I noted in our paper “What Unites Advocates of Speech Controls & Privacy Regulation?” if you buy into the argument that consumers are basically that stupid then it logically follows that people cannot be trusted or left to their own devices. Thus, government must intervene and establish a baseline “community standard” on behalf of the entire citizenry to tell them what’s best for them.

But there are good reasons to question the premise that consumers are blind to efforts to persuade or influence them — regardless of what type of media content or communications efforts we are talking about. I was recently reading Communication Power by Manuel Castells and liked what he had to say about how so many media critics make this false assumption. Castells rightly notes:

Interestingly enough, critical theorists of communication often espouse [a] one-sided view of the communications process. By assuming the notion of a helpless audience manipulated by corporate media, they place the source of social alienation in the realm of consumerist mass communication. And yet, a well-established stream of research, particularly in the psychology of communications, shows the capacity of people to modify the signified of the messages they receive by interpreting them according to their own cultural frames, and by mixing the messages from one particular source with their variegated range of communicative practices. (p. 127)

That’s exactly right, and it is even more true in an age of ubiquitous, interactive communications technologies. “The people formerly known as the audience” have the unprecedented ability to talk back, to compare notes, to collectively criticize and hold accountable those who previously held all the cards in the mass media age of the past. Most consumers are perfectly capable of judging the merits of advertising, commercial messages, or other content on their own; they cast a skeptical eye toward most claims but process those claims alongside other counter-claims, independent judgments, informational inputs, and “cultural frames,” as Castells rightly argues. We need to give the public some credit.

If you don’t like sharing information about your interests with content publishers so they can sell advertisers a chance to win your attention, your remedy is closing your browser. It’s that simple.

But writer Kevin Kelleher has an economically challenged piece on WashingtonPost.com suggesting that Internet users should try to charge content providers money.

He says users should email web companies the following terms: “By collecting, storing, selling, trading, reselling or exploiting for any commercial purposes any information about me, your site agrees to pay me a licensing fee of $100 per month.”

That’s a non-starter from the get-go because users might be worth $10 per year, depending on the company. Negotiating a deal where your use is actually tracked, a price is negotiated, and a payment is securely made would be more privacy invasive than the current state of affairs.

And that model has already been tried. It was called AllAdvantage.com. If ad rates rise again, an “infomediary” might be viable again, but we won’t get there with a silly “campaign” to undo the interest-data-for-content deal.

If you don’t like it, you can just close your browser, or pick carefully among the services that don’t use advertising (like Twitter, so far). That’s a perfectly acceptable choice, and life can be lived well without free Internet-based content.

So, go ahead! Live your values! Walk your talk! Close your browser.

In a classic example of the 5:00 Friday news drop, the Department of Homeland Security has announced that it is extending the REAL ID compliance deadline. Forty-six of 56 jurisdictions, it reports, were not able to implement even the interim measures it proposed requiring by December 31st when it last extended the deadline in May of 2008.

The DHS statement insists that a full compliance deadline on May 10, 2011 remains in effect. What that really means is that there will be another false crisis as that deadline approaches, and the DHS will extend the deadline yet again.

The better alternative is to repeal the national ID law and the worthless, expensive pseudo-security it represents. It is not to revive REAL ID under its alternative name “PASS ID.”

Unless you live under a digital rock (whaddyamean, you don’t check TechMeme hourly?), you have probably heard that EPIC filed a complaint with the Federal Trade Commission Thursday, alleging that Facebook’s revised privacy settings (and their implementation) constitute “unfair and deceptive trade practices” punishable under the FTC’s Section 5 statutory consumer protection authority. Specifically, EPIC demands, in addition to “whatever other relief the Commission finds necessary and appropriate,” that the FTC “compel Facebook to restore its previous privacy settings allowing users to:”

- “choose whether to publicly disclose personal information, including name, current city, and friends” and

- “fully opt out of revealing information to third-party developers”

In addition, EPIC wants the FTC to “Compel Facebook to make its data collection practices clearer and more comprehensible and to give Facebook users meaningful control over personal information provided by Facebook to advertisers and developers.”

I’ll have more to say about this very complicated issue in the days to come, but I wanted to share, and elaborate on, two press hits I got on this issue today. First, in the PC World story, I noted that “we’re already seeing the marketplace pressures that Facebook faces move us toward a better balance between the benefits of sharing and granular control” and expressed my concern “about the idea that the government would be in the driver’s seat about these issues.” In particular, Facebook has made it easier for users to turn off the setting that includes their friends among their “publicly available information” that can be accessed on their profile by non-friends (unless the user opts to make their profile inaccessible through Facebook search and outside search engines).

In other words, this is an evolving process and Facebook faces enormous pressure to strike the right balance between openness/sharing and closedness/privacy. While Facebook’s critics assume that it is simply placing its own financial interests above the interests of its users, the reality is more complicated: Facebook’s greatest asset lies not in the sheer number of its users and not just in the information they share, but in the total degree of engagement in the site. The more time users spend on the site, the better, because Facebook is rewarded by advertisers for attracting and keeping the attention of users.

The headline strikes fear: “House Takes Steps to Boost Cybersecurity,” says the Washington Post.

What boondoggle are they embarking on now?

Cybersecurity is hundreds of different problems that should be handled by thousands of different actors. The federal government is in no position to “fix” cybersecurity, as I testified in the House Science Committee earlier this year.

But this is a good news story. Realizing that its own cybersecurity practices are not up to snuff, the House of Representatives will be ramping up training for its staff.

Better awareness of the ins and outs of securing computers, data, and networks will disincline Congress to undertake a rash, sweeping “overhaul” of the systems and incentives that produce and advance cybersecurity.

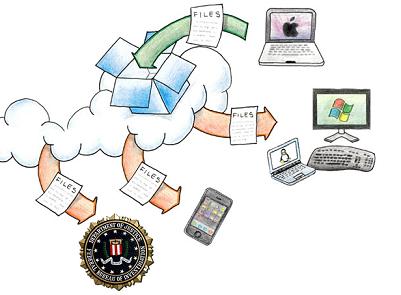

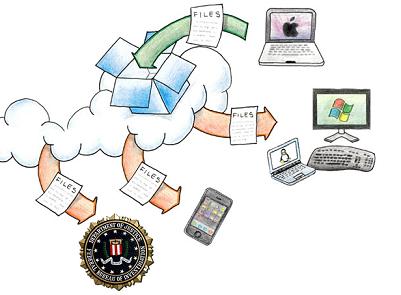

A colleague apparently suggested that the nice people at Dropbox should email me with an invitation to use their services. The concept appears simple enough—remote storage that makes users’ files available on any laptop, desktop, or phone.

I was intrigued by it because it’s a discrete example of a “cloud” computing service. How do they handle some of the key privacy challenges? A cloud over remote computing and storage is the likelihood that governments will use it to discover private information with dubious legal justification, or without any at all. (Businesses likewise can rightly worry that competitors working with governments might access trade secrets.)

Well, it turns out they don’t handle these challenges. Dropbox is a privacy black box.

I homed right in on their “Policies” page, looking for assurance that they would protect the legal rights of users to control information placed in the care of their service. There’s precious little to be found.

There’s no promise that they would limit information they share with authorities to what is required by valid legal process. There’s no promise that they would notify users of a warrant or subpoena. They do reserve the right to monitor access and use of their site “to comply with applicable law or the order or requirement of a court, administrative agency or other governmental body.”

Is there protection in the fact that files are stored encrypted on their service? The site—though not the terms of service—says “All files stored on Dropbox servers are encrypted (AES-256) and are inaccessible without your account password.” Not if Dropbox is willing to monitor the use of the site on behalf of law enforcement. They can simply gather your password and hand it over.

National Security Letter authority and the impoverished “third party doctrine” in Fourth Amendment law put cloud-user privacy on pretty weak footing. Dropbox’s policies do nothing to shore that up. It’s not alone, of course. It’s just a nice discrete example of how “the cloud” exposes your data to risks that local storage doesn’t.

There are a few other problems with it. They don’t promise to notify users directly of changes to the privacy policy. (“[W]e will notify you of any material changes by posting the new Privacy Policy on the Site…”) And they reserve the right to change their terms of service any time, without giving you the right to access and remove your files. When they decide to make their free service a paid service, they could hold your files hostage unless you sign up for x years. Data liberation is an important term of services like this.

Golly, even as I’ve been writing this, friends have tweeted that they like Dropbox. It sounds like a fine service for what it is. I just wouldn’t put anything on there that you wanted to keep private or that you really wanted to be sure you could access.

At Berin’s suggestion, cross-posting from Cato@Liberty:

I’ve just gotten around to reading Orin Kerr’s fine paper “Applying the Fourth Amendment to the Internet: A General Approach.” Like most everything he writes on the topic of technology and privacy, it is thoughtful and worth reading. Here, from the abstract, are the main conclusions:

First, the traditional physical distinction between inside and outside should be replaced with the online distinction between content and non-content information. Second, courts should require a search warrant that is particularized to individuals rather than Internet accounts to collect the contents of protected Internet communications. These two principles point the way to a technology-neutral translation of the Fourth Amendment from physical space to cyberspace.

I’ll let folks read the full arguments to these conclusions in Orin’s own words, but I want to suggest a clarification and a tentative objection. The clarification is that, while I think the right level of particularity is, broadly speaking, the person rather than the account, search warrants should have to specify in advance either the accounts covered (a list of e-mail addresses) or the method of determining which accounts are covered (“such accounts as the ISP identifies as belonging to the target,” for instance). Since there’s often substantial uncertainty about who is actually behind a particular online identity, the discretion of the investigator in making that link should be constrained to the maximum practicable extent.

The objection is that there’s an important ambiguity in the physical-space “inside/outside” distinction, and how one interprets it matters a great deal for what the online content/non-content distinction amounts to. The crux of it is this: Several cases suggest that surveillance conducted “outside” a protected space can nevertheless be surveillance of the “inside” of that space. The granddaddy in this line is, of course, Katz v. United States, which held that wiretaps and listening devices may constitute a “search” though they do not involve physical intrusion on private property. Kerr can accommodate this by noting that while this is surveillance “outside” physical space, it captures the “inside” of communication contents. But a greater difficulty is presented by another important case, Kyllo v. United States, with which Kerr deals rather too cursorily.

The New York Times has a great summary of yesterday’s Exploring Privacy workshop at the FTC, where Adam and I made the case that restrictive, preemptive privacy regulations affecting online advertising are likely to harm, not help, consumers. Check out Adam’s excellent summary here. Adotas notes:

… the highlight was the third panel, when Jeff Chester, executive director for the Center for Digital Democracy and an outspoken online privacy advocate, and Berin Szoka, director of the Center for Internet Freedom at the Progress and Freedom Foundation, got into a 10-minute tete-a-tete on the importance of targeting in advertising as well as journalism.

[Jeff] Chester railed against targeting in general and called for a “citizen friendly” system while Szoka [stressed] the importance of targeted advertising in funding high-cost content. Szoka argued that for users to access certain content at no cost, there is a trade-off in giving up certain types of data.

Jeff and I will be “taking our show on the road” Wednesday morning with a four-way debate moderated by Rob Atkinson of ITIF, also including Howard Beales and Ari Schwartz, as well as the FTC’s Peder McGee. Given the energy level in our discussion at the FTC, this more focused panel promises to be a great discussion of how to maximize the many competing values of consumers—or, more precisely (from my perspective, anyway), how to educate and empower users to make those decisions for themselves.

So don’t miss it if you can attend (1101 K Street, Suite 610, Washington, DC), and be sure to watch the live-streaming if you can’t!

The Technology Liberation Front is the tech policy blog dedicated to keeping politicians' hands off the 'net and everything else related to technology.