I’ve been floating around in conservative policy circles for 30 years and I have spent much of that time covering media policy and child safety issues. My time in conservative circles began in 1992 with a 9-year stint at the Heritage Foundation, where I launched the organization’s policy efforts on media regulation, the Internet, and digital technology. Meanwhile, my work on child safety has spanned 4 think tanks, multiple blue ribbon child safety commissions, countless essays, dozens of filings and testimonies, and even a multi-edition book.

During this three-decade run, I’ve tried my hardest to find balanced ways of addressing some of the legitimate concerns that many conservatives have about kids, media content, and online safety issues. Raising kids is the hardest job in the world. My daughter and son are now off at college, but the last twenty years of helping them figure out how to navigate the world and all the challenges it poses was filled with difficulties. This was especially true because my daughter and son faced completely different challenges when it came to media content and online interactions. Simply put, there is no one-size-fits-all playbook when it comes to raising kids or addressing concerns about healthy media interactions.

The Technology Liberation Front is the tech policy blog dedicated to keeping politicians' hands off the 'net and everything else related to technology.

Running List of My Research on AI, ML & Robotics Policy

by Adam Thierer on July 29, 2022 · 0 comments

[last updated 4/2/2024]

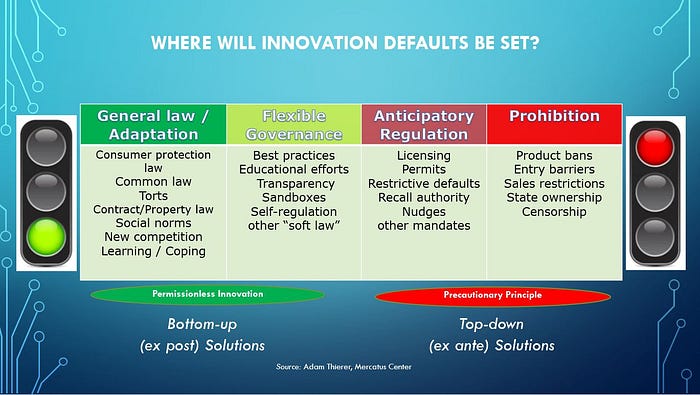

This is a running list of all the essays and reports I’ve already rolled out on the governance of artificial intelligence (AI), machine learning (ML), and robotics. Why have I decided to spend so much time on this issue? Because this will become the most important technological revolution of our lifetimes. Every segment of the economy will be touched in some fashion by AI, ML, robotics, and the power of computational science. It should be equally clear that public policy will be radically transformed along the way.

Eventually, all policy will involve AI policy and computational considerations. As AI “eats the world,” it eats the world of public policy along with it. The stakes here are profound for individuals, economies, and nations. As a result, AI policy will be the most important technology policy fight of the next decade, and perhaps the next quarter century. Those who are passionate about the freedom to innovate need to prepare to meet the challenge as proposals to regulate AI proliferate.

There are many socio-technical concerns surrounding algorithmic systems that deserve serious consideration and appropriate governance steps to ensure that these systems are beneficial to society. However, there is an equally compelling public interest in ensuring that AI innovations are developed and made widely available to help improve human well-being across many dimensions. And that’s the case that I’ll be dedicating my life to making in coming years.

Here’s the list of what I’ve done so far. I will continue to update this as new material is released: